Last week, AMD’s Radeon RX 6600 graphics card officially launched and became available to the masses, billed as a more budget-friendly option for gamers content with gaming at just Full HD (1920 x 1080). As with the 6600XT, AMD is not releasing a version of the card with a reference cooler and is leaving the cooling design entirely to its AiB partners.

In this case, the custom-cooled 6600 I have in my lab is from Gigabyte’s Eagle lineup and, to both my knowledge and my surprise, is the only 6600 to be fitted with a triple-fan cooling solution. Naturally, the question that arises is: does this actually help the card run cooler and perform better?

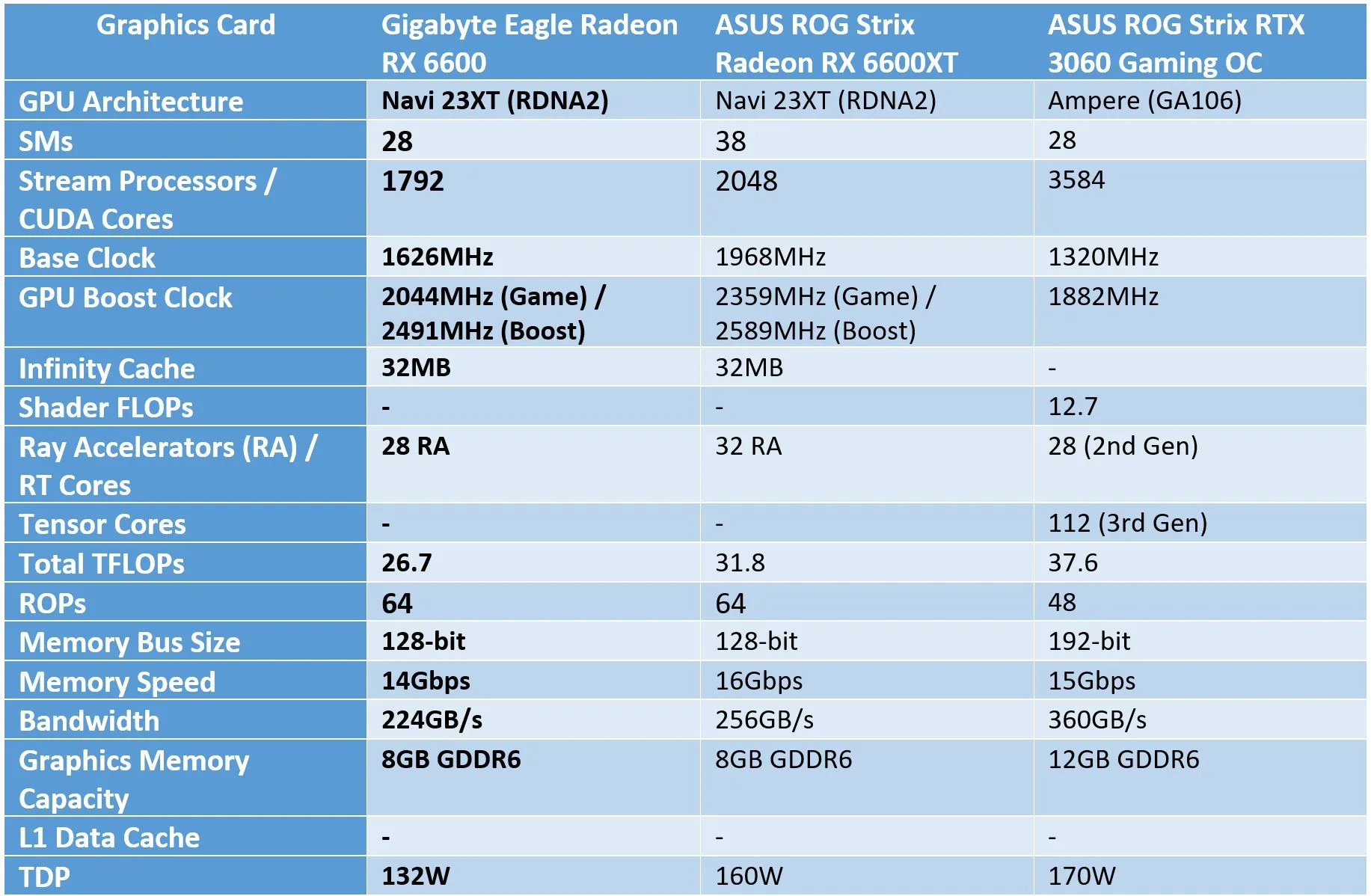

Specifications

Design

Compared to the previous cooler shroud design for its Eagle series, the shroud on this 6600 looks more streamlined. Personally, I like it better, simply because I wasn’t a big fan of the uneven, lopsided spine that was part and parcel of Gigabyte’s earlier Eagle cooler shroud design.

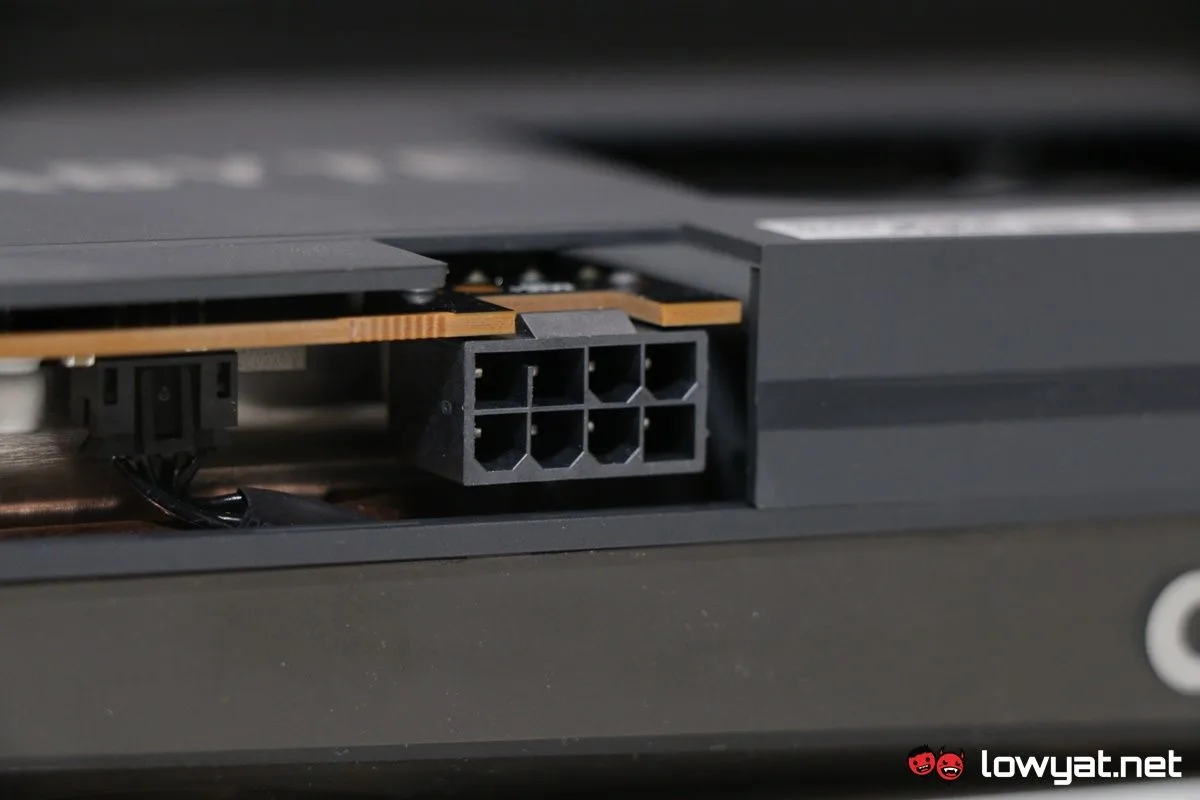

Moving on, if you’re a fan of Gigabyte’s cooling solution, you’ll be happy to know that the one on this variation of the 6600 is basically a carbon copy of the one on the 6600XT in the same lineup. That means the same screen cooling; the same heatsink that extends beyond the length of the card’s PCB; the same Windforce 3X cooling system; even the same 3D Active Fans – Gigabyte’s own fancy name for what are essentially zero RPM fans – that spin in alternating directions. With regard to the last point, the fans are even lubricated with the same graphene nano lubricant.

Flip the card around and you’ll see a backplate with an exposed section at the tail end of the card, giving you a glimpse of the massive heatsink that I mentioned earlier. Another surprise with the Eagle 6600, though, is that despite all that metal and hard plastic, the card is actually not all that heavy.

In terms of outputs, the Eagle Radeon RX 6600 comes with two DisplayPort 1.4 ports and two HDMI 2.1 ports, which is plenty for a card of this calibre and for the Full HD gamer who also has a penchant for operating in a multi-display setup.

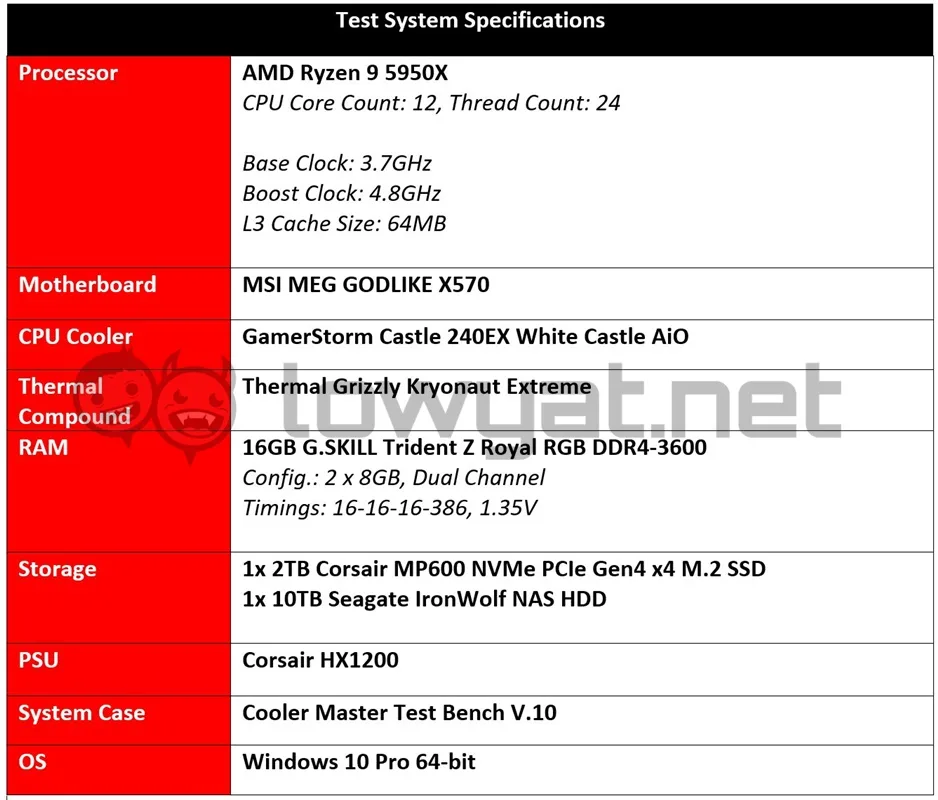

Testbed

My testbed for the 6600 remains the same as with previously tested graphics cards. That means the same AMD Ryzen 9 5950X, 16GB DDR4-3600MHz RAM, as well as the same MSI MEG GODLIKE X570 motherboard. One factor to take note of is that throughout the review process, I tested the card without activating AMD’s Smart Access Memory (SAM) feature.

Also, considering how the GPU is technically aimed at gamers who have no intention of gaming at resolutions beyond 1080p, the gaming benchmarks in the following section list the card’s performance at just that resolution. Lastly, for comparison’s sake, I am also benching the card against the ASUS ROG Strix variants of the 6600XT and GeForce RTX 3060, both of which I have previously reviewed.

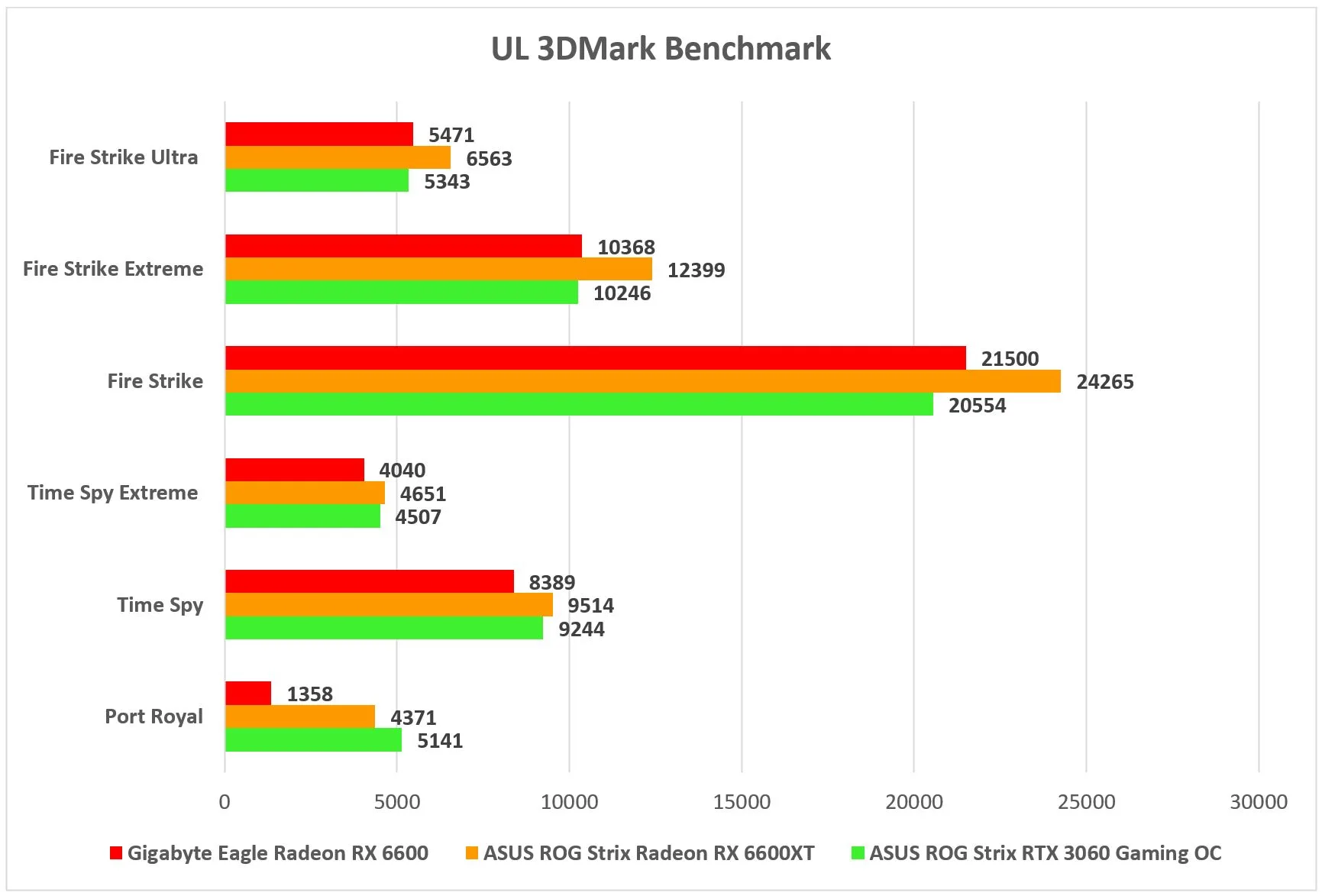

Benchmarks

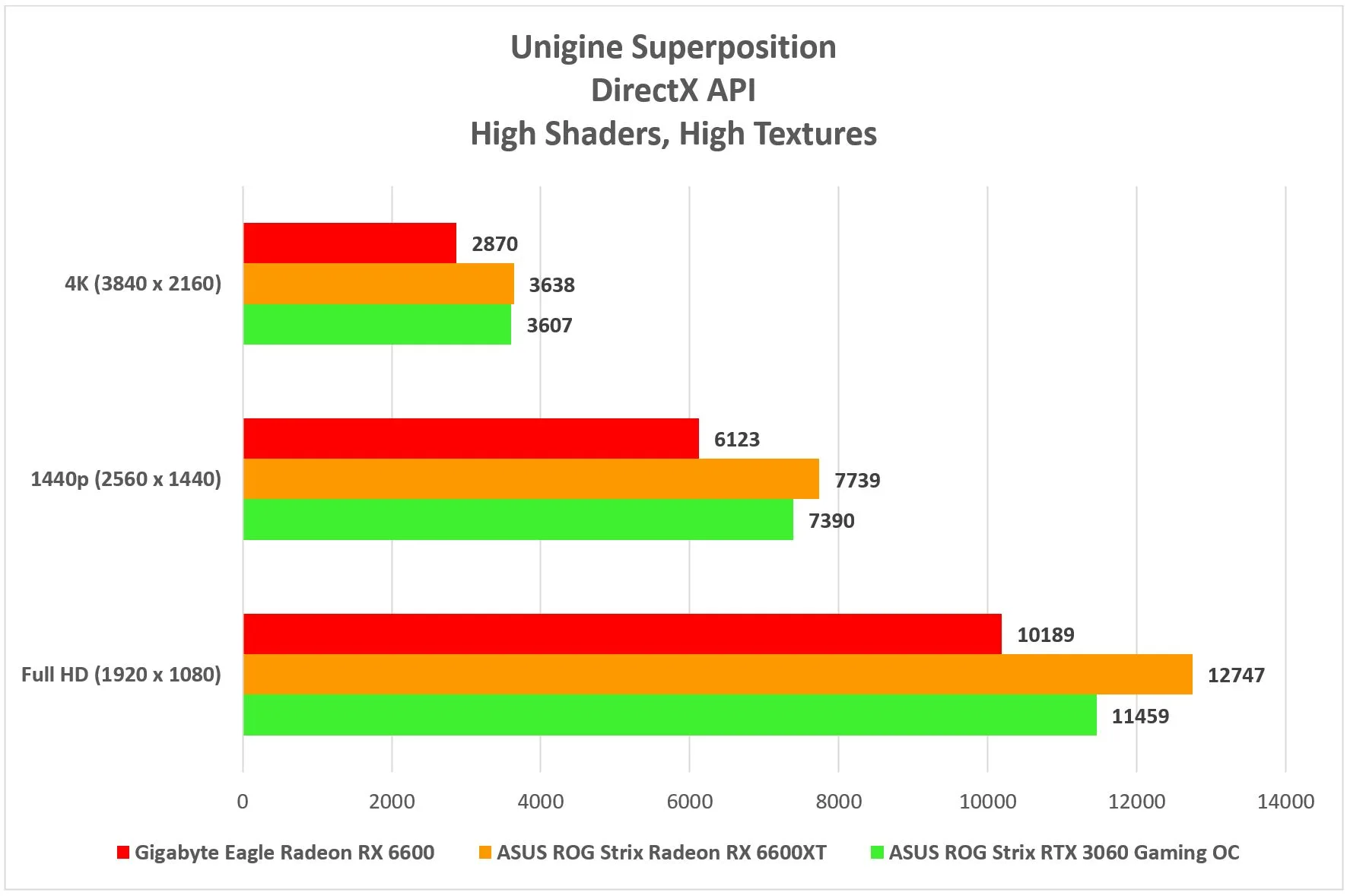

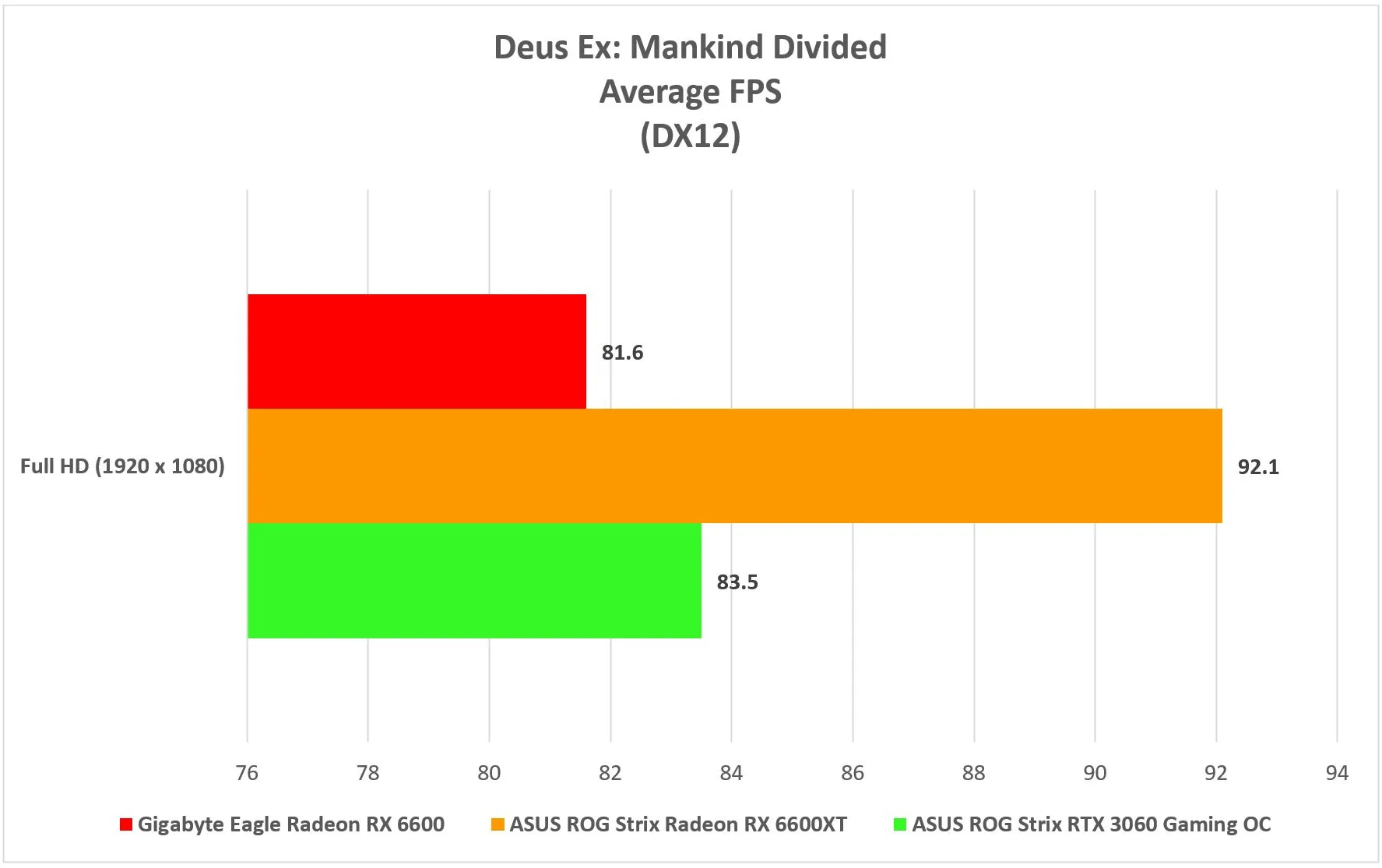

In the synthetic benchmark portion of the review, the 6600 performs more or less in the expected ballpark. As a “lesser” variant of the 6600 series, the card clearly plays catch-up to the 6600XT but surprisingly trades blows with the RTX 3060 on all fronts, save for one. On that note, the 6600 is clearly not built to handle ray-tracing as efficiently as the other two GPUs, and it shows in its appallingly low score for the Port Royal test.

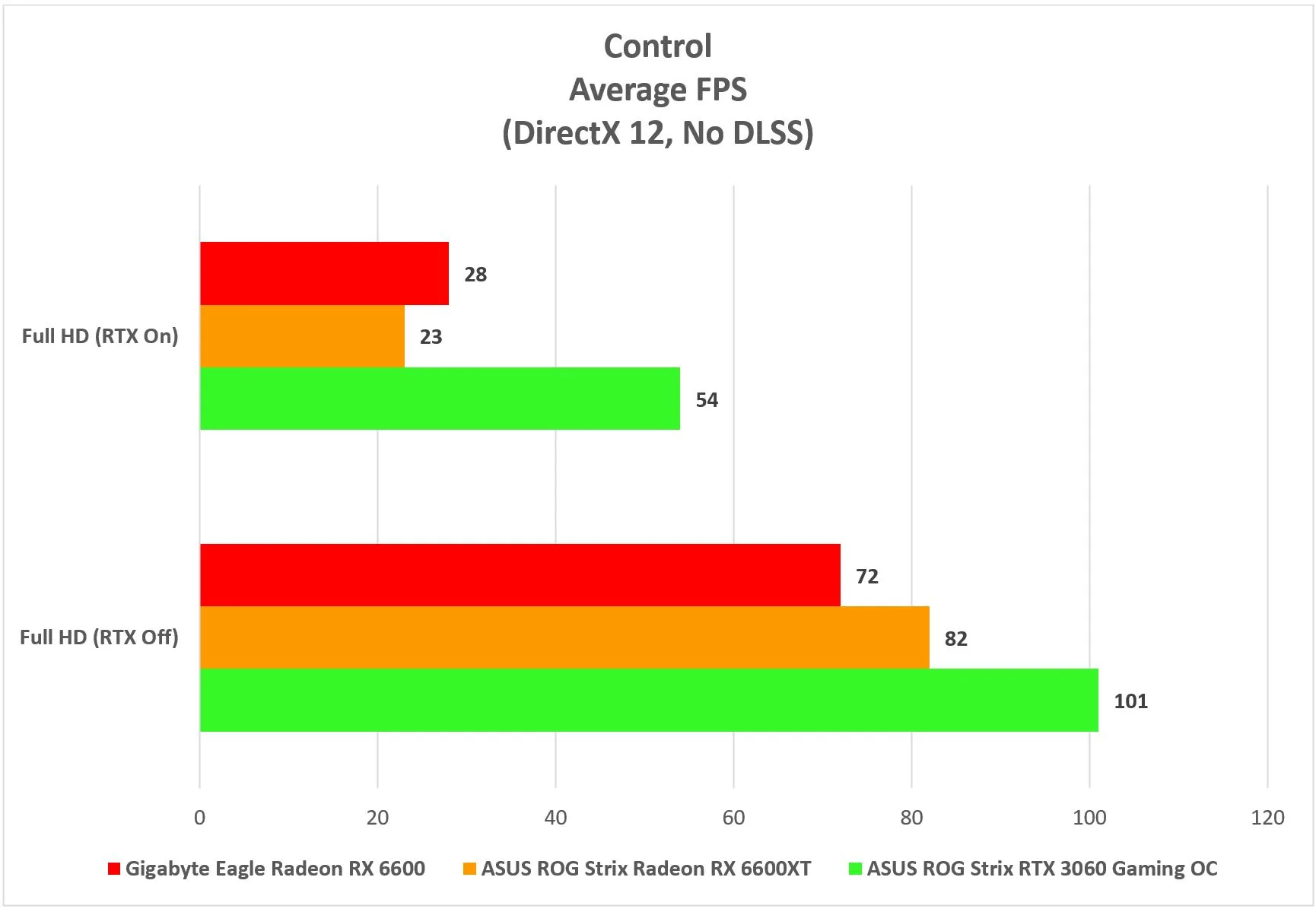

In the realm of gaming, that erratic performance with ray-tracing carries forward in some RTX and ray-tracing-capable titles. In Control, the 6600 struggles to stay above the 30 fps line, and that’s during the calm before the storm (read: in a safe space). Upon engaging with monsters and getting into a firefight, the frames actually dip a little further but, mercifully, not to the point of being unbearable.

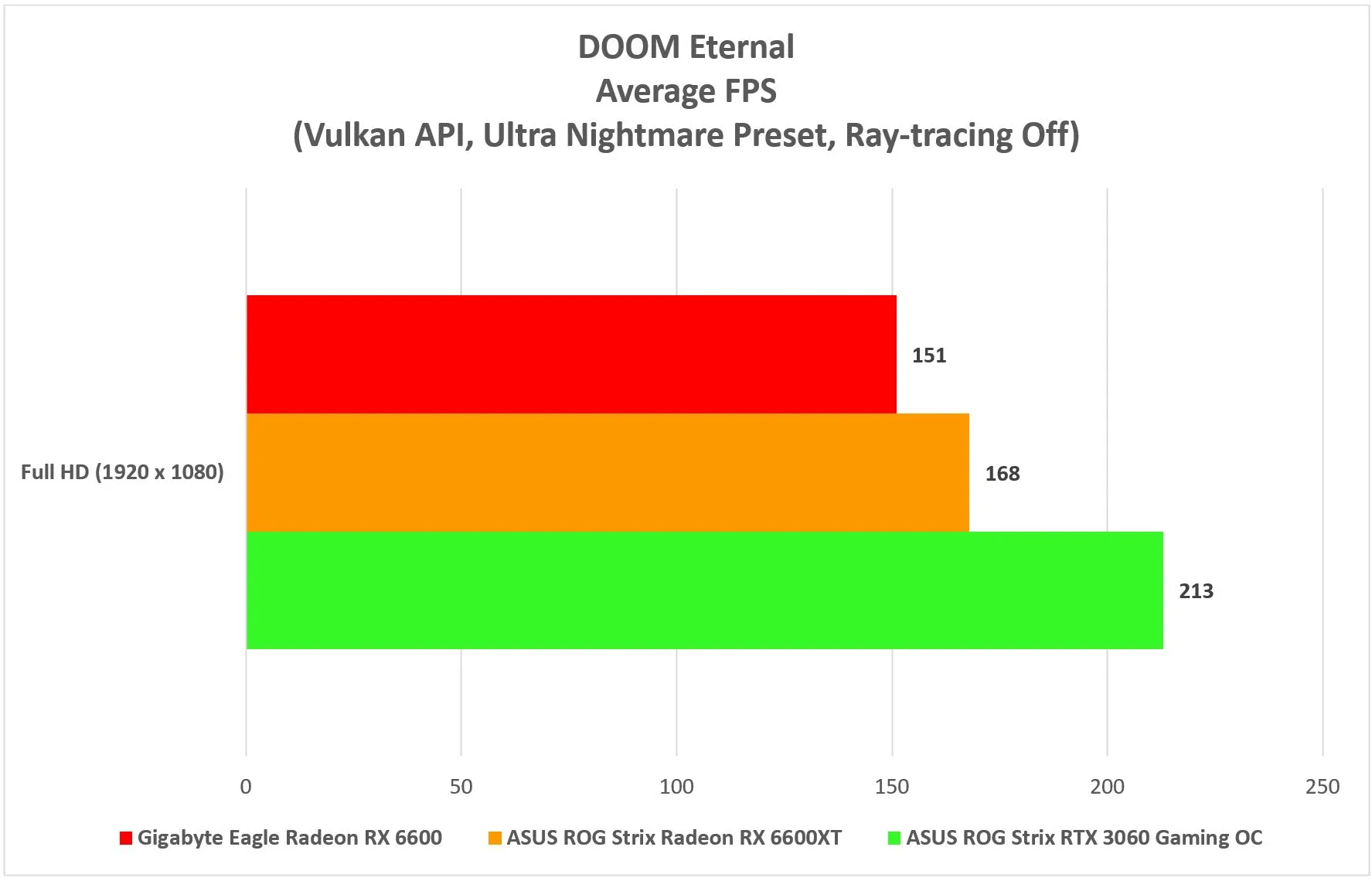

And forget trying to run DOOM Eternal with ray-tracing turned on. With the feature enabled, the lower-than-average framerate made the experience unenjoyable and, with this GPU specifically, there were multiple instances where the frames dipped into single-digit territory. Of course, with ray-tracing disabled, the card is plenty capable of keeping those frames above the 100 fps mark.

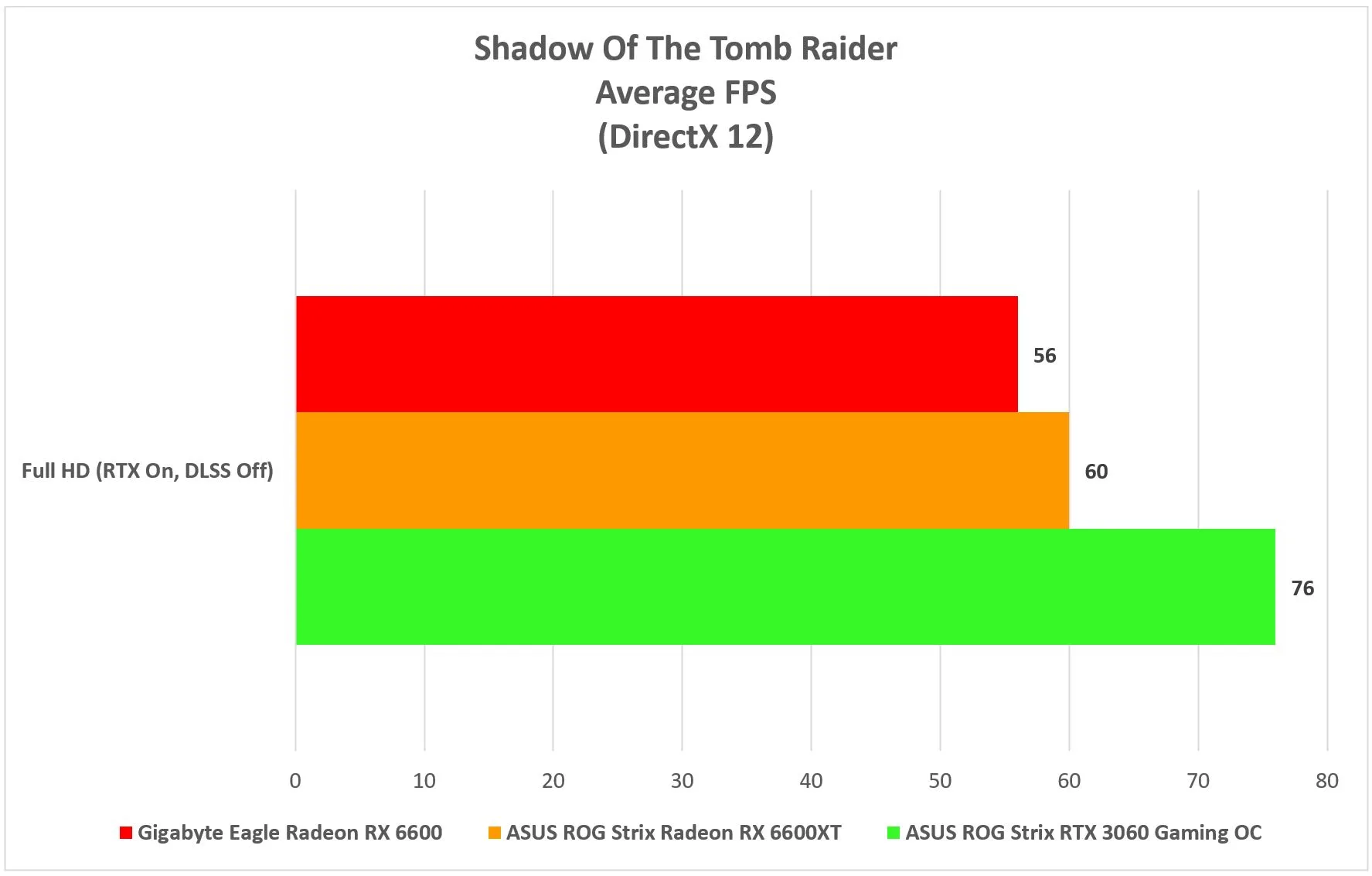

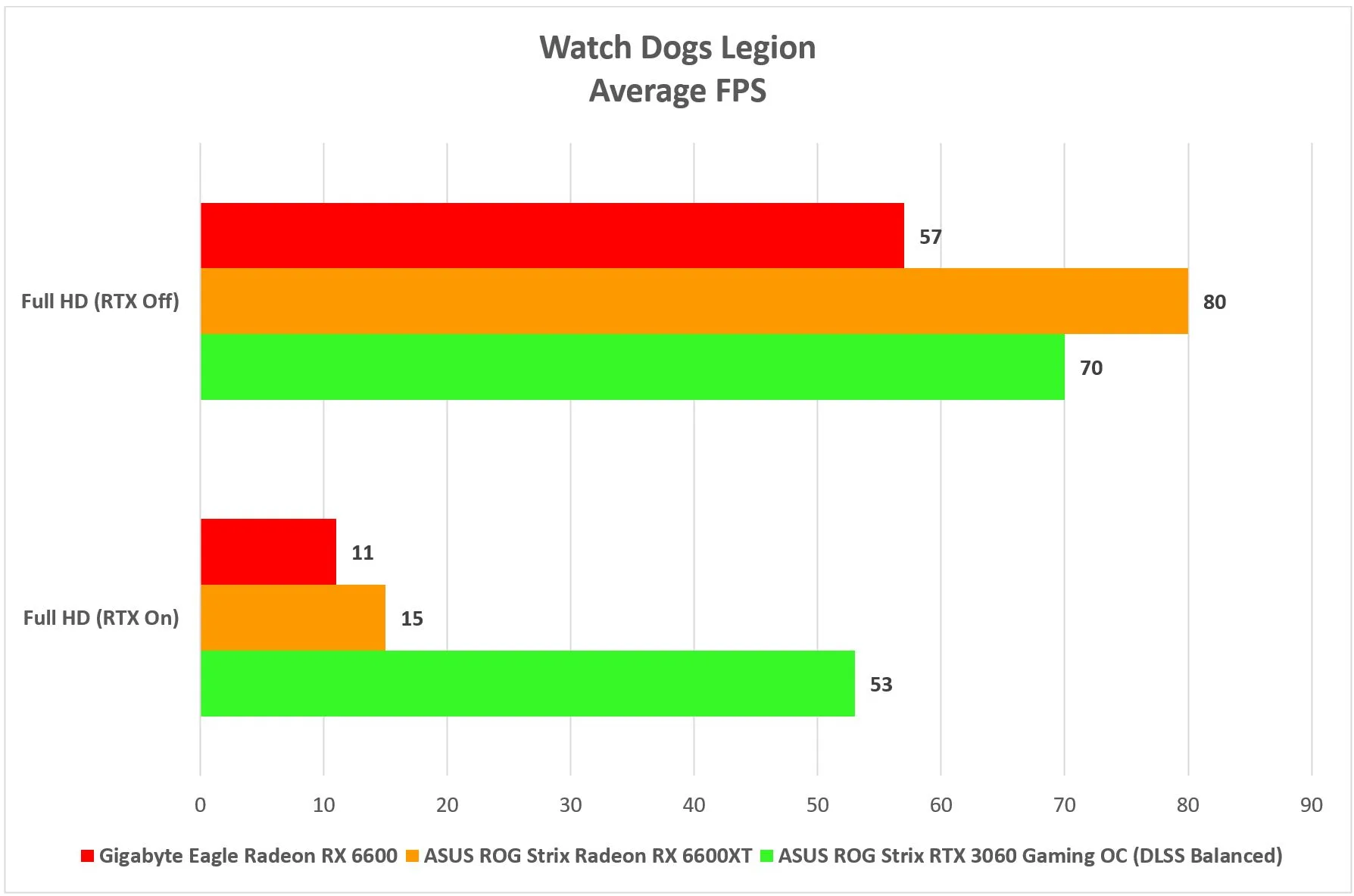

The only title in which the 6600 was able to churn out more than 30 fps on average with ray-tracing enabled was Shadow of the Tomb Raider. The game’s benchmark held an acceptable 50 fps on average and peaked at 94 fps at the tail end of the run. As for Watch Dogs: Legion, running the game with ray-tracing on and without the aid of upscaling simply doesn’t cut it, with the game crawling at 11 fps. Again, with ray-tracing off, the 6600 was free to do its job and, once again, deliver acceptable frame rates.

Temperature And Power Consumption

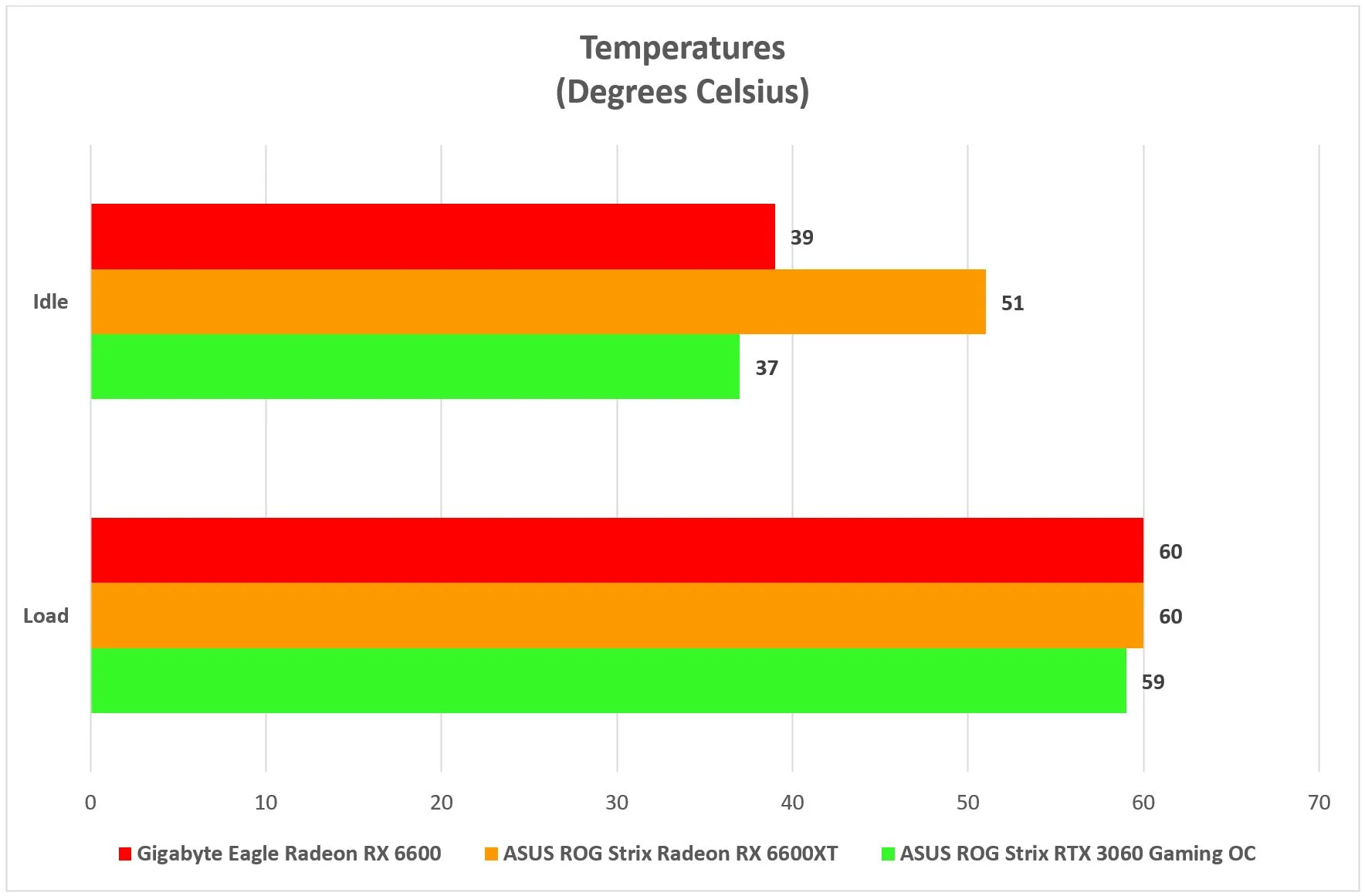

Compared with the 6600XT and RTX 3060, the 6600’s peak operating temperature of 60°C seems par for the course. That said, the card also seems to run slightly hotter than its NVIDIA equivalent, and that’s with the ambient temperature being kept at a chilly 20°C.

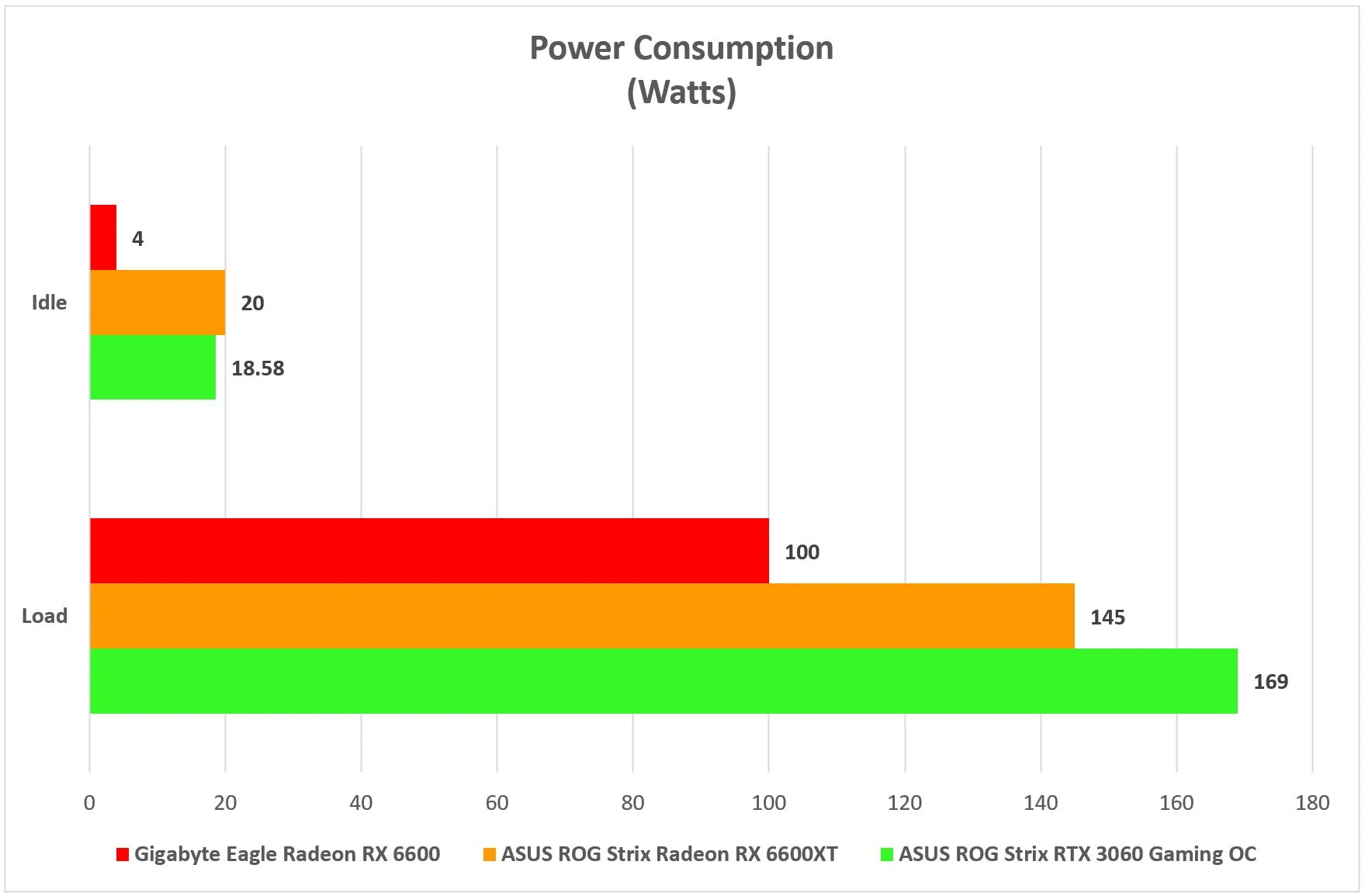

What is impressive about the 6600, though, is its power consumption. On paper, AMD says its entry-level GPU pulls in 132W at most, but throughout the testing period, the card never seemed to pull more than 100W from the wall at any given time. Even when idling, its power consumption stayed within single-digit territory, making it one of the more power-efficient cards I have tested to date.

Conclusion

At a starting price of US$329 (~RM1369), the Radeon RX 6600 is clearly AMD’s new budget-friendly RDNA2 king, but I’d be remiss if I didn’t also say that, at this point, I’m not quite sure what message AMD is trying to send to the budget-conscious PC gamer. By comparison, the card only trails behind the 6600XT by a small margin and, if you’re not fussed about ray-tracing, there’s little to suggest against getting this GPU and simply forgoing the beefier “XT” sibling.

On that note, this Gigabyte Eagle Radeon RX 6600 retails at an SRP of RM2139 which, in my personal opinion, is still a little on the high side. Unfortunately, it is unlikely that we will see a price reduction anytime soon, and of course, there is also the subject of the GPU’s availability, what with the ongoing global chip shortage.