NVIDIA’s Ada Lovelace architecture has had a bit of time to sit and stew in the market with its flagship card, the GeForce RTX 4090, and it goes without saying that, at this point, that card is the current king of the hill for GPUs. Today, the GPU brand is officially pulling back the curtain on its next best card, the GeForce RTX 4080.

In this review, I will be looking at the RTX 4080 FE (Founders Edition) specifically and, as always, seeing just how much of an uplift the card has over the last generation.

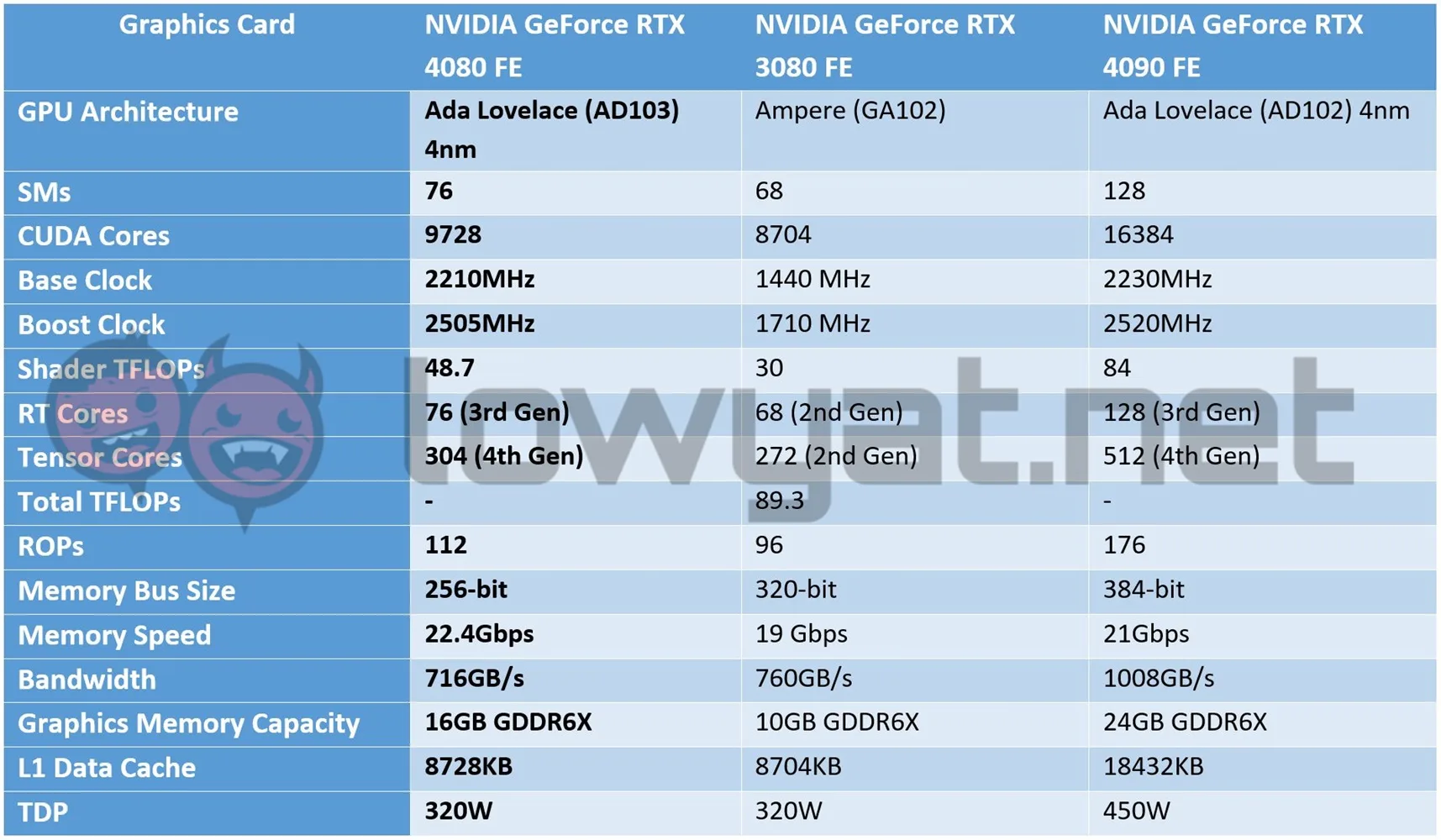

Specifications

Design

I’m not going to beat around the bush here: I had initially expected the RTX 4080 FE to be around the same size as the RTX 3080 FE that launched two years ago. Instead, NVIDIA opted to make it the spitting image of the RTX 4090 FE, with the only difference separating it from its more powerful sibling being its weight.

To that end, that basically means the same, slightly redesigned cooling shroud, the improved Dual Axis Flowthrough fan orientation, and the same see-through grille-style fins that envelop the entirety of the RTX 4080 FE’s PCB.

Beneath the hood, the RTX 4080 FE is running on a watered-down Lovelace GPU, otherwise known as the AD103. Compared to the RTX 3080 though, the card has eight more Streaming Multiprocessors (SMs), 1024 more CUDA cores at 9728, and 48.7 Shader TFLOPs. As with all Lovelace GPUs, it’s also fitted with 3rd generation RT Cores and 4th generation Tensor Cores, 76 and 304 of them respectively. More importantly, it also has 16GB GDDR6X graphics memory, which is 6GB more than the original RTX 3080.
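For anyone who wants to sanity-check those numbers, here is a minimal Python sketch of the arithmetic. The SM, core, and TFLOPs figures come from the paragraph above. The boost clock of 2.505GHz is my own assumption and is not quoted in this review, and the function and variable names are purely illustrative. The TFLOPs estimate uses the usual rule of thumb that each CUDA core can issue one fused multiply-add (two floating-point operations) per clock.

```python
# Figures quoted in the review for the RTX 4080.
RTX_4080_SMS = 76            # one RT Core per SM, so 76 RT Cores means 76 SMs
RTX_4080_CUDA_CORES = 9728
RTX_4080_TENSOR_CORES = 304

# ASSUMPTION (not stated in the review): boost clock in GHz.
ASSUMED_BOOST_CLOCK_GHZ = 2.505


def cores_per_sm(cuda_cores: int, sms: int) -> int:
    """CUDA cores in each Streaming Multiprocessor."""
    return cuda_cores // sms


def shader_tflops(cuda_cores: int, clock_ghz: float) -> float:
    """Peak FP32 throughput, counting one FMA (2 FLOPs) per core per clock."""
    return 2 * cuda_cores * clock_ghz / 1000


# Work backwards from the review's "eight more SMs, 1024 more CUDA cores".
rtx_3080_sms = RTX_4080_SMS - 8
rtx_3080_cuda_cores = RTX_4080_CUDA_CORES - 1024

if __name__ == "__main__":
    print("RTX 4080 cores per SM:", cores_per_sm(RTX_4080_CUDA_CORES, RTX_4080_SMS))
    print("RTX 4080 Tensor Cores per SM:", RTX_4080_TENSOR_CORES // RTX_4080_SMS)
    print("RTX 3080 SMs / CUDA cores:", rtx_3080_sms, "/", rtx_3080_cuda_cores)
    tflops = shader_tflops(RTX_4080_CUDA_CORES, ASSUMED_BOOST_CLOCK_GHZ)
    print("RTX 4080 Shader TFLOPs (estimate):", round(tflops, 1))
```

Under that assumed clock, the estimate rounds to the same 48.7 Shader TFLOPs quoted above, and both cards work out to 128 CUDA cores per SM.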

Another distinguishing feature of the RTX 4080 FE is the power needed to run it. To be clear, you still need a 16-pin PCIe Gen5 power connector for it to operate (frankly, there’s no running away from it), but instead of an adapter that takes four 8-pin connectors, you’ll only need one that takes three.

Ports-wise, the RTX 4080 FE gets the standard three DisplayPort 1.4 ports and one HDMI 2.1 port, both capable of supporting up to 8K resolutions.

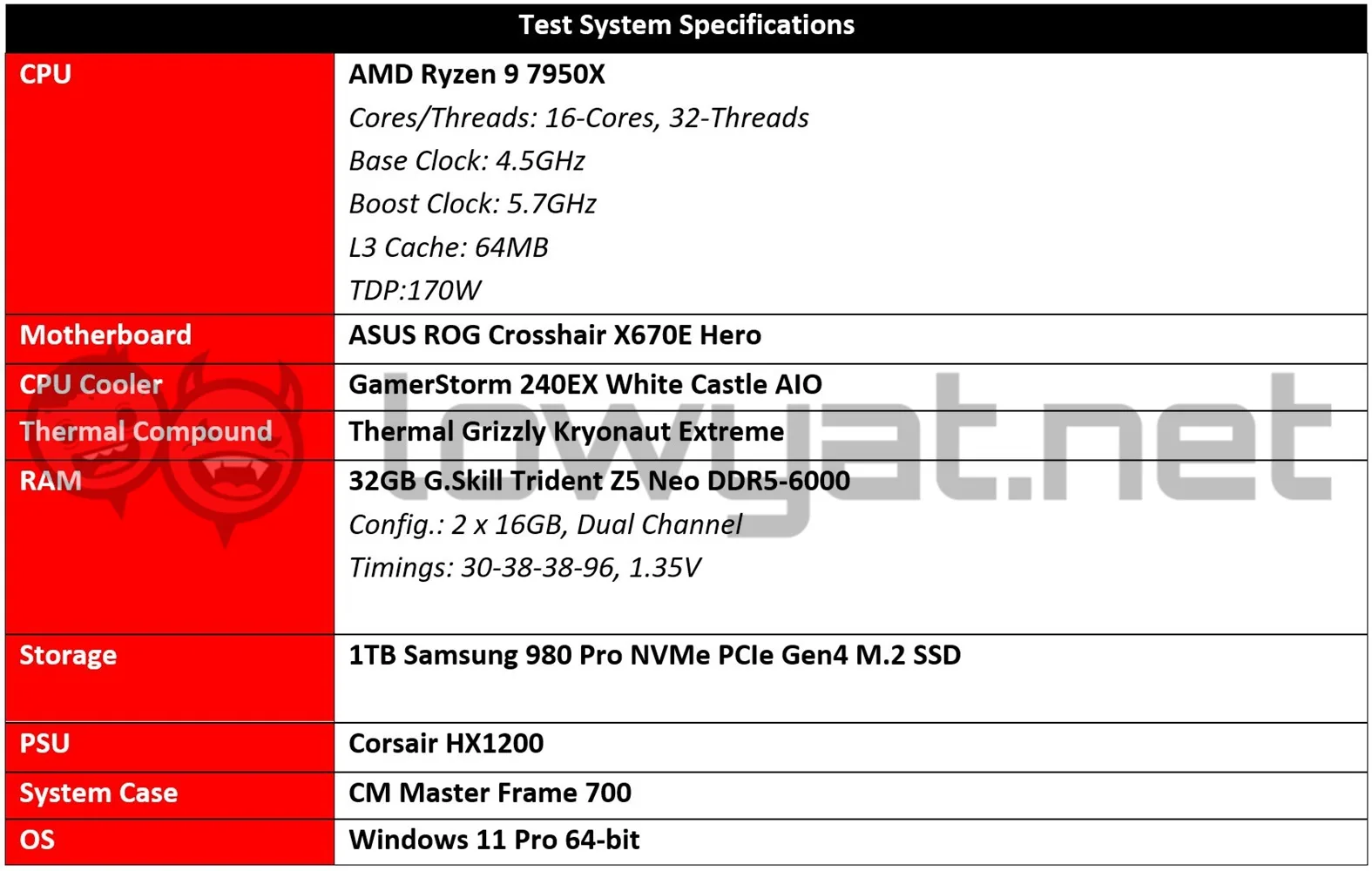

Testbed

To see just how much of an improvement the RTX 4080 FE is over the last generation, I will be pitting the card against its direct Ampere predecessor, the RTX 3080 FE. I know, many of you will obviously be saying that I should be benching the card against the RTX 3080 Ti instead, but the reason that isn’t the case here is simple: I don’t have the latter, mainly because NVIDIA never provided a permanent unit to me, on account of the severe chip shortage that plagued the past couple of years, I suspect.

I will also be retesting the RTX 3080 FE with my updated AMD Ryzen 7000 Series testbench, comprising the components listed in the table above, along with GeForce driver version 526.72, provided by NVIDIA at the time of this review. Of course, this is all to ensure that the performance metrics are up to date.

Benchmarks

I think it is safe to say that whatever NVIDIA planned with Lovelace, it can be described as nothing short of magic, as one of the GPU brand’s fellows put it. Just as the RTX 4090 pulled ahead of the RTX 3090 Ti, we’re looking at a repeat performance for the RTX 4080 FE versus our RTX 3080.

All across the board, you can see the RTX 4080 FE leaving the RTX 3080 FE in the dust, especially when it comes to the synthetic benchmark portion of our review. Yes, it is a brand new architecture that we’re looking at here, but the fact that it has 6GB more GDDR6X graphics memory than its predecessor also plays an obvious role in its ability to run and perform faster.

Having said that, the drivers that were provided by NVIDIA did also provide a performance boost of sorts to the RTX 3080. That is, in comparison to when I first tested the card at its launch two years ago.

I’m not joking when I say that the RTX 4080 FE is powerful across the board and that it runs rings around its predecessor, either. In the real-world benchmark portion of the review, the card isn’t just owning every single title on my games list: it is a dominating force, with the RTX 3080 barely able to hold a candle to it, and even then, it isn’t by a huge margin, or even at 4K resolution.

Also, if some of you are wondering why the RTX 3080’s stats for Cyberpunk 2077 with DLSS 3.0 are empty, that’s simply because the press build of the game with NVIDIA’s AI-trained upscaling technology didn’t work with it. As mentioned in my review of the RTX 4090 FE, the feature is tied to the new Lovelace architecture for now, which in my opinion is both a good and a bad thing.

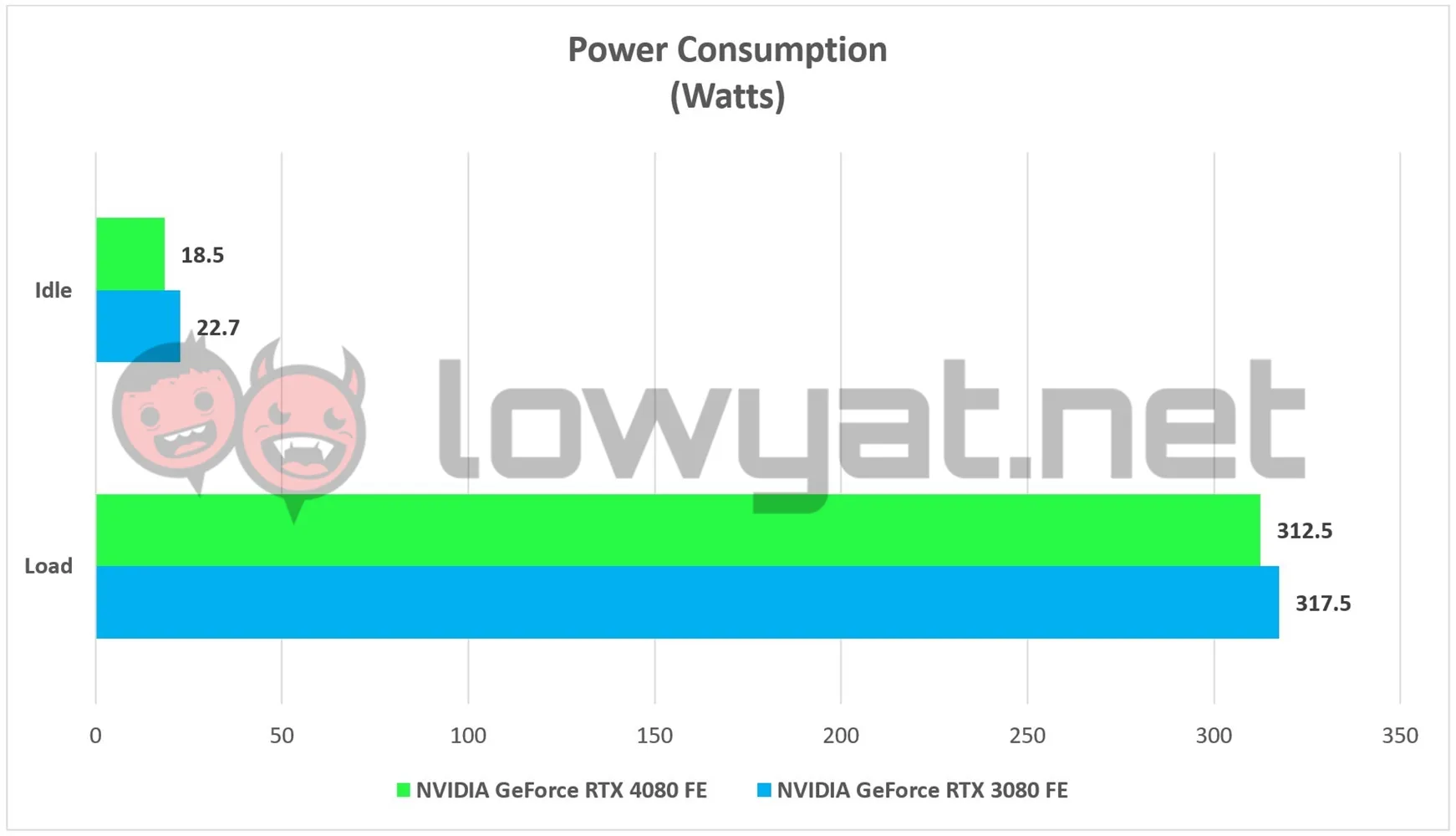

Temperature And Power Consumption

Another fascinating aspect of the RTX 4080 FE is that it is running on the same 320W TDP as the RTX 3080 FE. However, as you can see in the graph above, it consumes a little less power than its Ampere-based predecessor, which makes it ever so slightly more power efficient on paper. But honestly, this is basically just me nitpicking at the finer details.

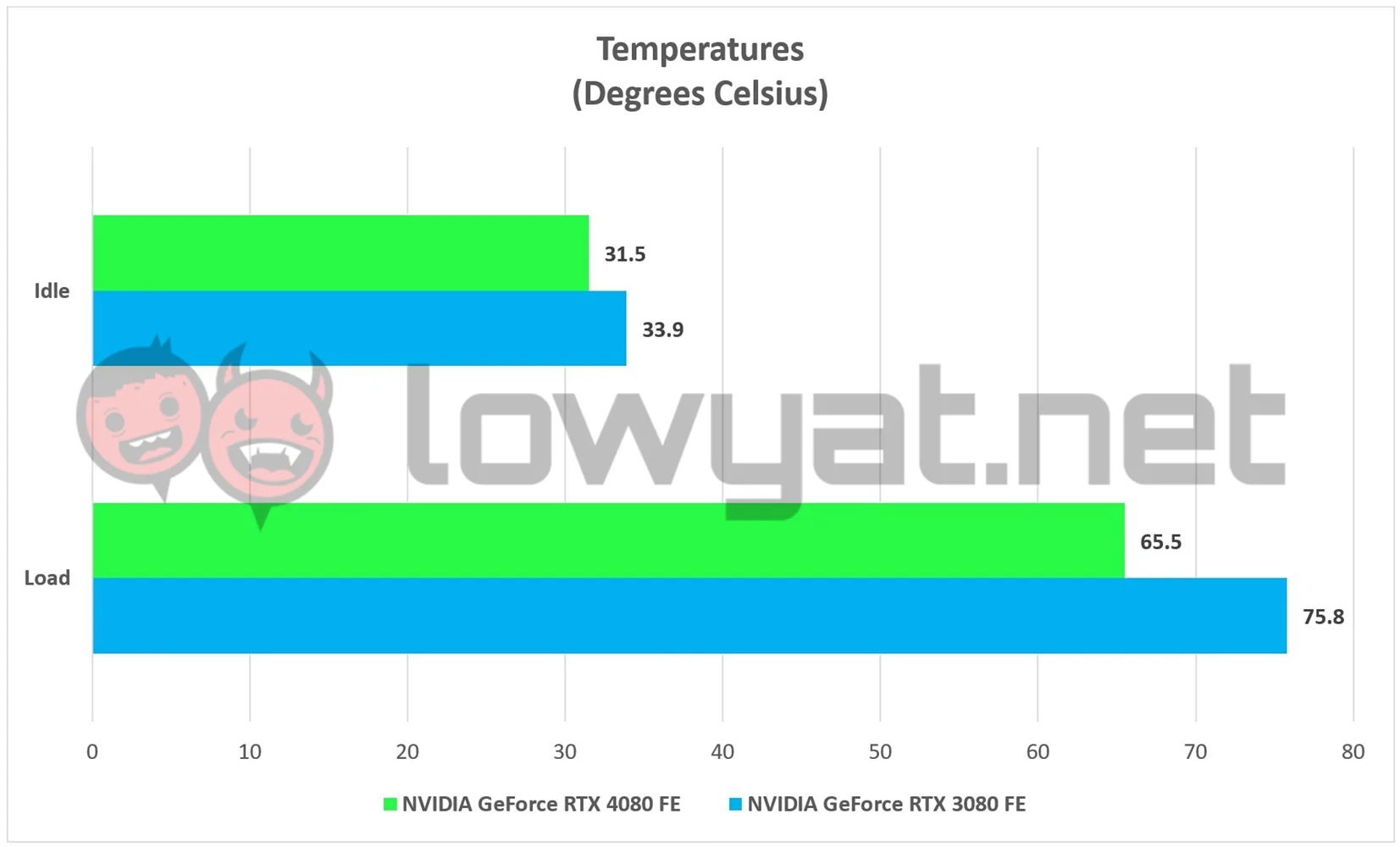

But where the RTX 4080 FE does impress me is in the way it handles its temperature at full whack. Clearly, that massive hunk of metal that is called its heatsink works, as the card’s maximum operating temperature is 10°C lower on average, compared to the RTX 3080 FE.

Conclusion

Back in 2020, I said in my review of the RTX 3080 FE that the card was, at the time, the king of the hill for 4K gaming, as was the RTX 2080 Ti before it. As for NVIDIA’s GeForce RTX 4080 FE, this card is nothing short of breathtaking in its delivery, both in gaming and productivity. More to the point, it, along with the RTX 4090, is setting the bar for other cards in its class.

Speaking of which, there is also the subject of its direct AMD rivals, the RDNA3-based Radeon RX 7900 XTX and 7900 XT, which AMD has touted as the GPUs that will give the RTX 4080 a run for its money, both quite literally and in terms of performance. At launch, the RTX 4080 is expected to retail from RM6300, while the price of Team Red’s two cards starts at US$899 (~RM4085). It is important to note, however, that at this time, we still don’t have any tangible data for the latter cards.

Putting all that aside, I can wholeheartedly endorse the RTX 4080 as a significant upgrade for gamers looking to trade up their older RTX 3070, RTX 3070 Ti, or RTX 3080 cards for something more up-to-date. For those of you building a brand new desktop PC, I’ll condense the entire review into these few words: this GPU slaps.

Photography by John Law.