Earlier today, NVIDIA kicked off this year’s GTC event with the announcement of its next-generation Hopper GPU architecture. Like its predecessors, Hopper is designed to power the GPU maker’s next wave of AI data centres, and it succeeds the Ampere architecture that launched back in 2020.

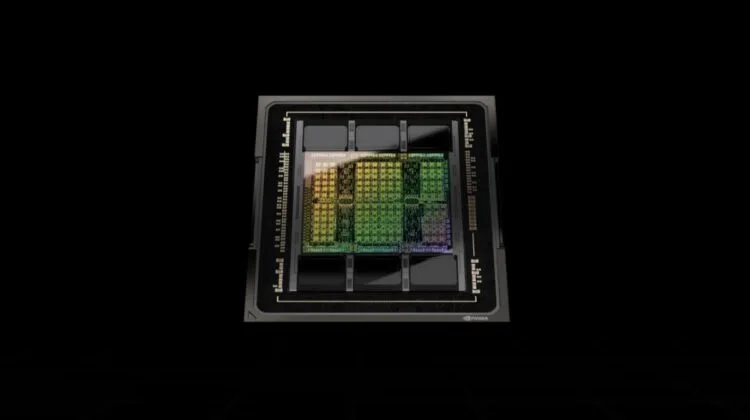

As part of the unveiling, NVIDIA also launched its Hopper-based GPU for accelerated computing, the H100. Specs-wise, the chip is built on TSMC’s 4nm process node and packs 80 billion transistors, making it one of the most transistor-packed chips on the market today. It also introduces a new Transformer Engine, which NVIDIA says is up to six times faster than the previous generation. 4th-generation NVLink arrives alongside a new external NVLink Switch that extends NVLink as a scale-up network beyond the server, connecting as many as 256 H100 GPUs at nine times the bandwidth of the last generation’s HDR Quantum InfiniBand. 2nd-generation Secure Multi-Instance GPU (MIG) technology allows one H100 GPU to be partitioned into seven smaller, isolated instances, and NVIDIA says it extends MIG capabilities by up to seven times over the last generation. Finally, the H100 adds Confidential Computing to protect AI models and customer data while they are being processed.

Of course, one NVIDIA product that will benefit from the new GPU is the next-generation DGX system. The DGX H100 houses eight H100 GPUs and delivers a whopping 32 petaflops of AI performance at the new FP8 precision. Each GPU is connected via 4th-generation NVLink, providing 900GB/s of connectivity bandwidth, while the external NVLink Switch allows up to 32 DGX H100 units to be networked together. This is the approach behind NVIDIA’s DGX SuperPOD supercomputers, such as Eos, the company’s most advanced supercomputer to date.
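For a rough sense of scale, the DGX H100 figures above reduce to simple arithmetic. The sketch below is illustrative only: the constant and function names are hypothetical, the inputs are the peak numbers NVIDIA quoted in its announcement, and the outputs are derived on paper, not measured performance.

```python
# Back-of-the-envelope figures derived from NVIDIA's quoted DGX H100 numbers.
# Inputs come from the announcement; outputs are plain arithmetic, not benchmarks.
# All names here are hypothetical, chosen for illustration.

GPUS_PER_DGX = 8               # H100 GPUs in one DGX H100
DGX_FP8_PFLOPS = 32            # quoted AI performance of one DGX H100 at FP8
MAX_DGX_VIA_NVLINK = 32        # DGX H100 units linkable via the external NVLink Switch
NVLINK_SWITCH_MAX_GPUS = 256   # H100 GPUs the NVLink Switch can connect


def per_gpu_fp8_pflops() -> float:
    """Quoted system FP8 figure divided evenly across its GPUs."""
    return DGX_FP8_PFLOPS / GPUS_PER_DGX


def total_gpus(num_dgx: int) -> int:
    """Number of H100 GPUs across num_dgx DGX H100 systems."""
    return num_dgx * GPUS_PER_DGX


def aggregate_fp8_pflops(num_dgx: int) -> int:
    """Sum of quoted FP8 petaflops across num_dgx systems (ignores scaling losses)."""
    return num_dgx * DGX_FP8_PFLOPS


if __name__ == "__main__":
    print(per_gpu_fp8_pflops())                      # 4.0
    print(total_gpus(MAX_DGX_VIA_NVLINK))            # 256
    print(aggregate_fp8_pflops(MAX_DGX_VIA_NVLINK))  # 1024
```

Note that 32 DGX H100 units at eight GPUs each lands exactly on the 256-GPU ceiling quoted for the NVLink Switch, and 32 such systems would total 1,024 petaflops (roughly one exaflop) of FP8 AI performance on paper.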

The NVIDIA H100 GPU will be available to customers starting in the third quarter of this year.

(Source: NVIDIA)