Apple has delayed the release of its upcoming child sexual abuse material (CSAM) detection feature, which was announced less than a month ago. The measure is part of a new suite of child protection features that the company plans to roll out in the upcoming iOS 15, iPadOS 15, and macOS Monterey updates, which are expected to arrive sometime this fall.

“Last month we announced plans for features intended to help protect children from predators who use communication tools to recruit and exploit them, and limit the spread of Child Sexual Abuse Material,” Apple said in a media statement. “Based on feedback from customers, advocacy groups, researchers and others, we have decided to take additional time over the coming months to collect input and make improvements before releasing these critically important child safety features.”

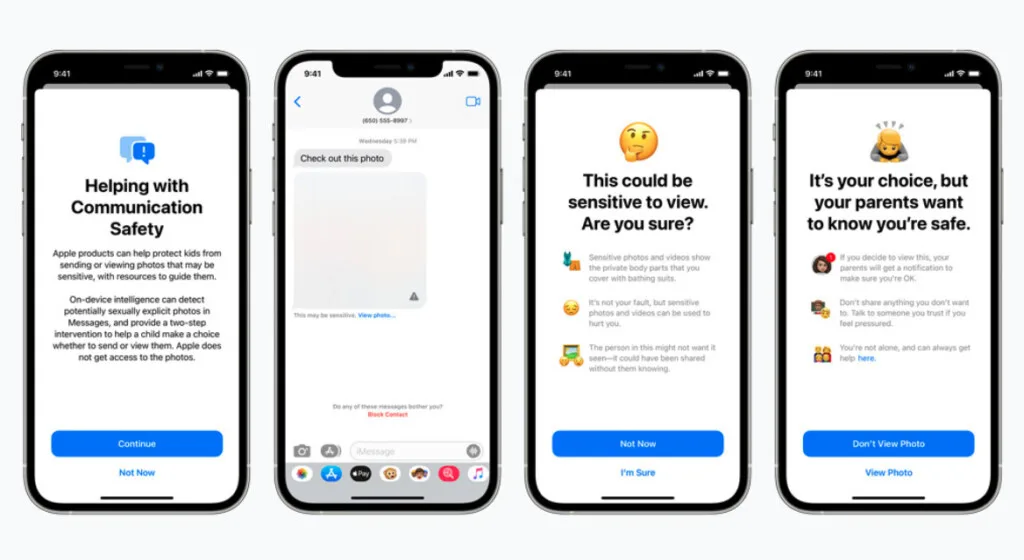

Apple’s original announcement detailed three major changes aimed at improving CSAM detection, involving iCloud Photos, Search, and Siri. According to Apple, the detection uses NeuralHash technology, which is designed to identify known CSAM on a user’s device by comparing hashes of the user’s images against hashes of known CSAM, and reports to Apple only once the number of matching images passes a threshold of 30. The matching happens on-device instead of in the cloud, which Apple says keeps the system privacy-friendly because the company has no access to the encrypted data. It was also revealed that Apple has been scanning iCloud Mail for CSAM since 2019.
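The threshold idea can be illustrated with a short Python sketch. This is only a simplified illustration and not Apple’s implementation: the function names are hypothetical, SHA-256 stands in for NeuralHash (which is a perceptual hash, so the real system can match visually similar images rather than only byte-identical files), and treating exactly 30 matches as enough to trigger a report is an assumption. Apple’s published design also wraps the matching in cryptographic protocols that are not modeled here.

```python
import hashlib

# Threshold cited in the article. Whether exactly 30 matches is enough,
# or more than 30 are needed, is an assumption of this sketch.
REPORT_THRESHOLD = 30


def placeholder_hash(image_bytes: bytes) -> str:
    """Hypothetical stand-in for NeuralHash.

    SHA-256 only matches byte-identical files; a perceptual hash is
    designed to also match resized or re-encoded copies of an image.
    """
    return hashlib.sha256(image_bytes).hexdigest()


def count_matches(images, known_hashes) -> int:
    """Count how many images hash to a value in the known-hash set."""
    return sum(1 for image in images if placeholder_hash(image) in known_hashes)


def should_report(images, known_hashes, threshold=REPORT_THRESHOLD) -> bool:
    """Flag a photo library only once the match count reaches the threshold."""
    return count_matches(images, known_hashes) >= threshold
```

In this sketch a library with 29 matching images is not flagged, while one with 30 is, which mirrors the stated intent that a handful of matches alone should not trigger a report.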

The CSAM detection feature drew backlash from security experts and privacy advocates, including the Electronic Frontier Foundation and the American Civil Liberties Union, over concerns that it could be susceptible to abuse and possibly lead to backdoor access in the near future. Craig Federighi, Apple’s senior vice president of software engineering, defended the new system by claiming that it is an advancement in privacy, and blamed the public backlash on miscommunication, saying that “messages got jumbled pretty badly.”

A leaked internal memo revealed that Apple is aware that “some people have misunderstandings” about how CSAM scanning works. It also contained a message from the National Center for Missing and Exploited Children (NCMEC) that described critics of the move as “the screeching voices of the minority.” NCMEC is Apple’s partner on the measure and is in charge of manually sifting through and confirming any reported potential CSAM.

Following the announcement of the delay, digital rights group Fight for the Future responded by insisting that “there is no safe way to do what [Apple] are proposing.” Director Evan Greer called the technology malware that can be easily abused to do enormous harm, and said that it would lead to surveillance on Apple products.

(Source: TechCrunch, 9to5Mac [1][2], The Mac Observer, Ars Technica)