Meta has introduced a set of new safeguards for teenage users in Malaysia through its Teen Accounts feature across Facebook, Instagram, and Messenger. The measures were outlined during a recent media roundtable organised by the company, where it highlighted its efforts to improve online safety for younger users.

The announcement comes as Malaysia moves towards stricter rules on youth access to social media. Current government recommendations state that children under 16 should not open personal social media accounts and may only use accounts managed by their parents.

Teen Accounts Enabled By Default

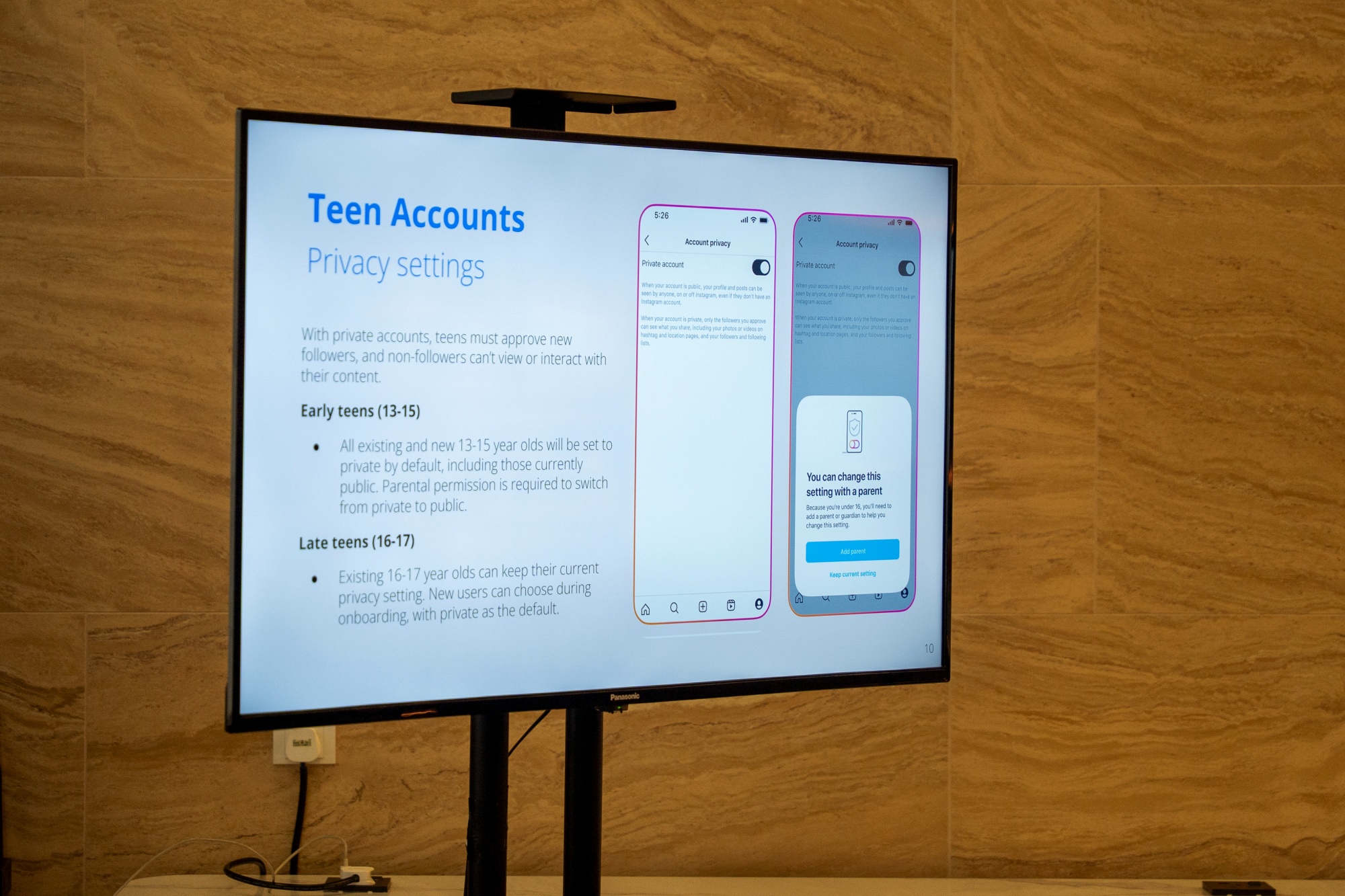

According to Meta, all users aged between 13 and 17 are automatically placed under Teen Accounts. These accounts are configured with stricter privacy protections by default, with additional safeguards applied to users aged 13 to 15.

For teens in this younger group, any changes to privacy settings require parental approval. Meta says 97% of these users keep the default settings, which the company presents as evidence that the safeguards are reducing exposure to potential risks.

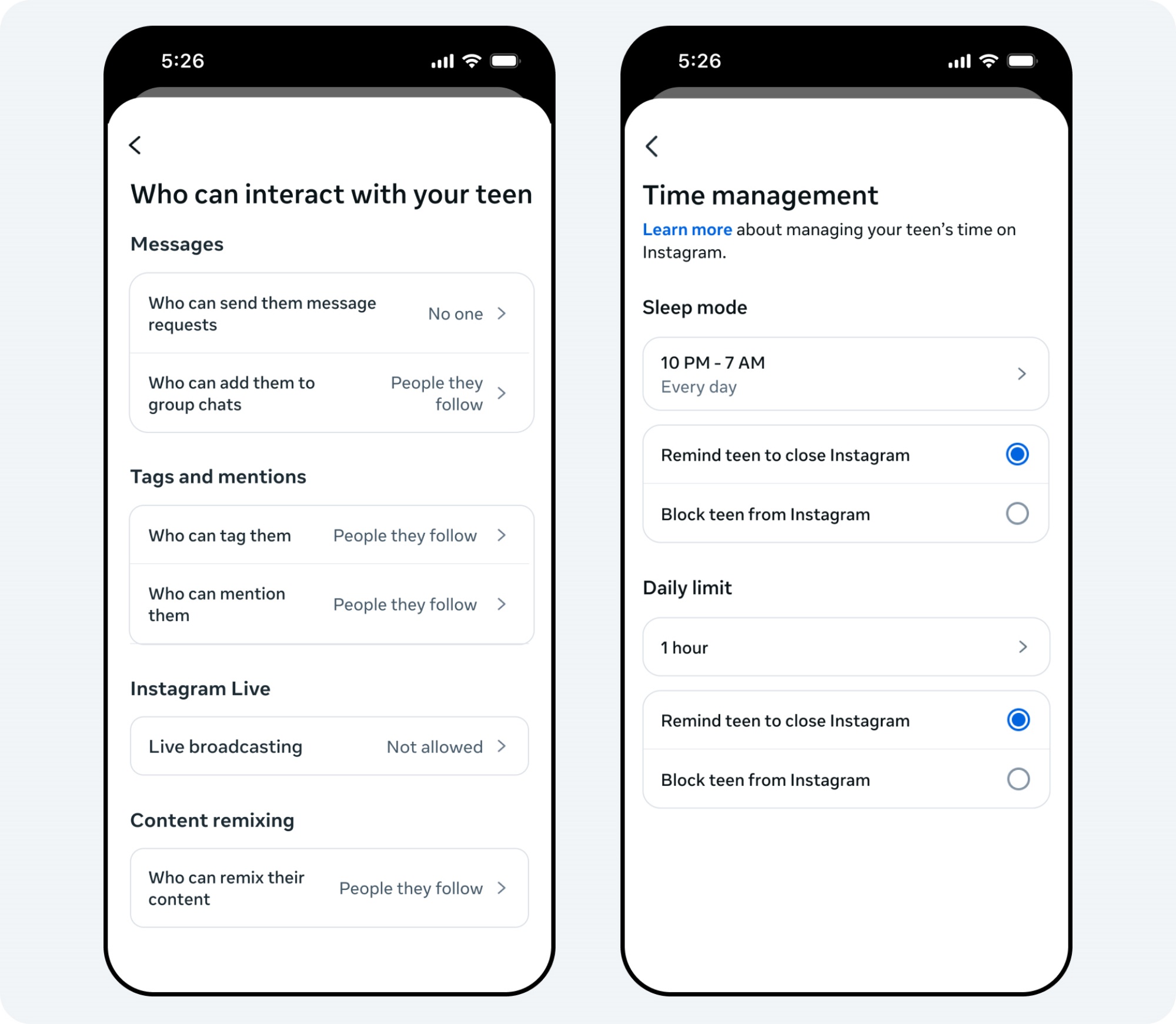

Teen Accounts are also set to private automatically. Teens can receive messages only from people they follow or are already connected with, and message requests from strangers are blocked.
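For readers who want to see the age tiers spelled out, the defaults described in this section can be sketched as a simple rule table. This is an illustrative reading of the article only, not Meta's implementation: the names `TeenDefaults` and `defaults_for_age` are hypothetical, and the sketch assumes that users aged 16 and 17 can change privacy settings without parental approval, which the article implies but does not state outright.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TeenDefaults:
    """Hypothetical summary of the defaults described in the article."""
    is_teen_account: bool
    private_by_default: bool
    blocks_stranger_messages: bool
    needs_parental_approval: bool  # applies to privacy-setting changes


def defaults_for_age(age: int) -> TeenDefaults:
    """Map an age to the defaults the article describes (illustrative only)."""
    if age < 13:
        # The article says accounts of under-13s may be removed entirely,
        # so this sketch does not model them.
        raise ValueError("under-13 accounts are outside this sketch")
    if age <= 17:
        # Ages 13 to 17: Teen Account, private, stranger messages blocked.
        # Ages 13 to 15: privacy changes also need parental approval.
        return TeenDefaults(True, True, True, needs_parental_approval=age <= 15)
    # Ages 18 and over: Teen Account defaults do not apply.
    return TeenDefaults(False, False, False, False)
```

Under these assumptions, `defaults_for_age(14)` reports that parental approval is needed, while `defaults_for_age(16)` keeps the private-by-default setting without the approval requirement.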

Screen Time Limits And Night-Time Restrictions

Meta has also implemented several usage-related controls aimed at promoting healthier digital habits among teenagers. Teen users will receive reminders prompting them to close the app once they reach one hour of daily usage.

In addition, notifications will be muted automatically between 10pm and 7am through a built-in sleep mode feature. This is intended to reduce late-night usage and minimise disruptions during rest hours. Teen Accounts are also restricted from creating live broadcasts or viewing streams that have been flagged as not age-appropriate.
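The usage rules above amount to two simple checks, and a rough sketch makes one subtlety visible: the 10pm to 7am window wraps past midnight, so it cannot be tested as a single range. The code below is an illustration under stated assumptions, not how Meta implements sleep mode. The function and constant names are hypothetical, and the sketch assumes the window includes 10pm exactly and ends just before 7am.

```python
from datetime import time

# Values taken from the article; the boundary handling is an assumption.
QUIET_START = time(22, 0)      # 10pm
QUIET_END = time(7, 0)         # 7am
DAILY_REMINDER_MINUTES = 60    # one hour of daily usage


def in_quiet_hours(now: time) -> bool:
    """Return True if notifications would be muted at this local time."""
    # The window crosses midnight, so it is "late evening OR early morning".
    return now >= QUIET_START or now < QUIET_END


def should_remind(minutes_used_today: int) -> bool:
    """Return True once daily usage reaches the reminder threshold."""
    return minutes_used_today >= DAILY_REMINDER_MINUTES
```

For example, `in_quiet_hours(time(23, 30))` and `in_quiet_hours(time(6, 59))` are both true under these assumptions, while a midday time falls outside the window.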

Content Filters And Safety Indicators

To reduce exposure to harmful content, Meta applies automatic filtering on material shown to teen users. The company says its algorithm removes or limits content containing words, terms, or themes deemed unsuitable for younger audiences.

Additional safety indicators are also displayed during interactions with other users. These include contextual information such as the month and year an account joined the platform, along with safety tips designed to help teens identify suspicious accounts or potential scams. A profanity filter is also enabled by default to block obscene language and symbols.

Parental Control And Family Centre Tools

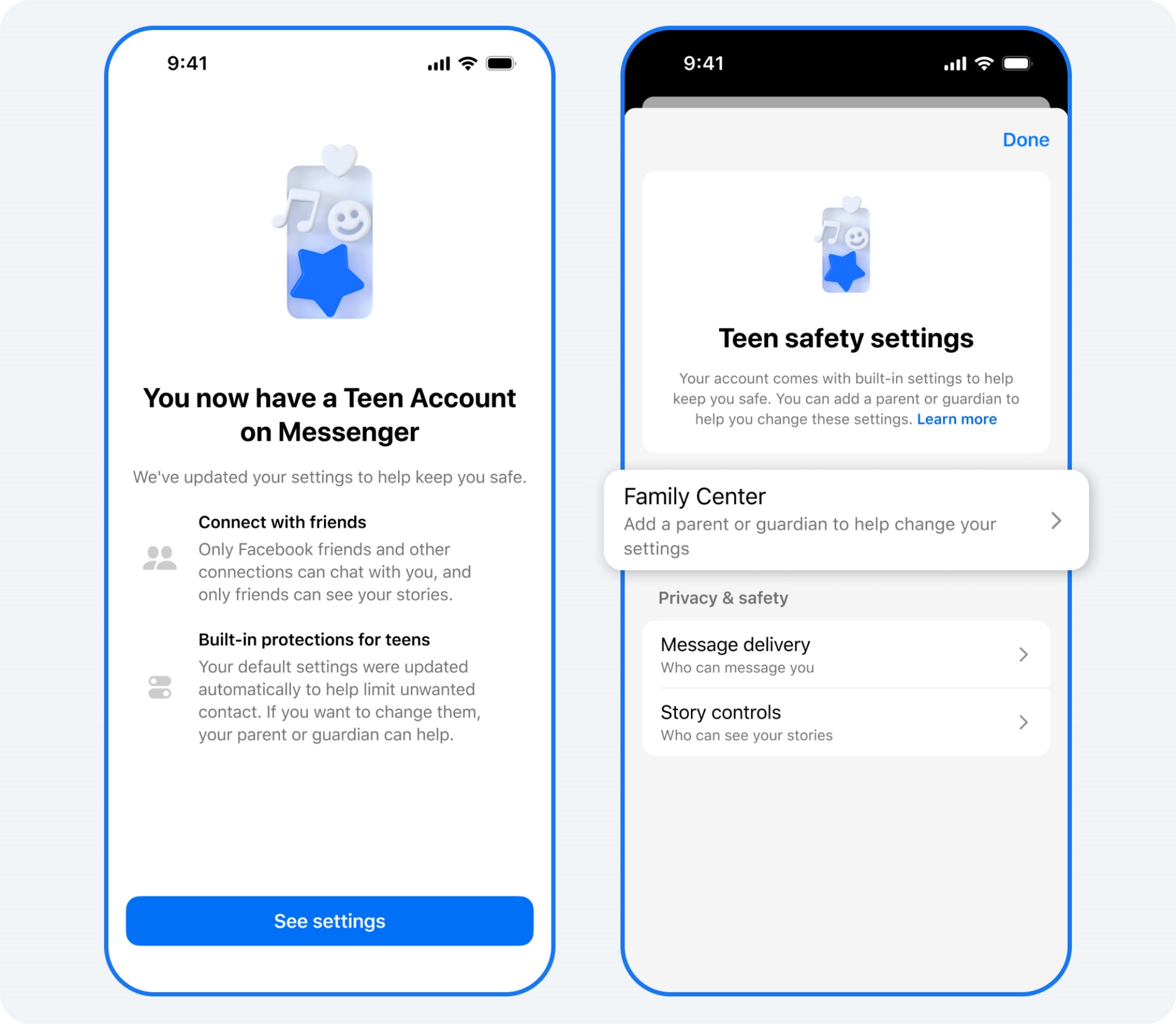

Parents can manage their children’s accounts through Meta’s Family Centre hub, which consolidates parental controls and monitoring tools in one place. To grant approvals or modify restrictions, parents must register their own accounts as linked parent profiles.

Through the hub, parents can enforce blocking rules, adjust usage limits, and manage account access more directly. The system also allows them to review certain privacy settings and approve requested changes.

Age Verification And Underage Detection

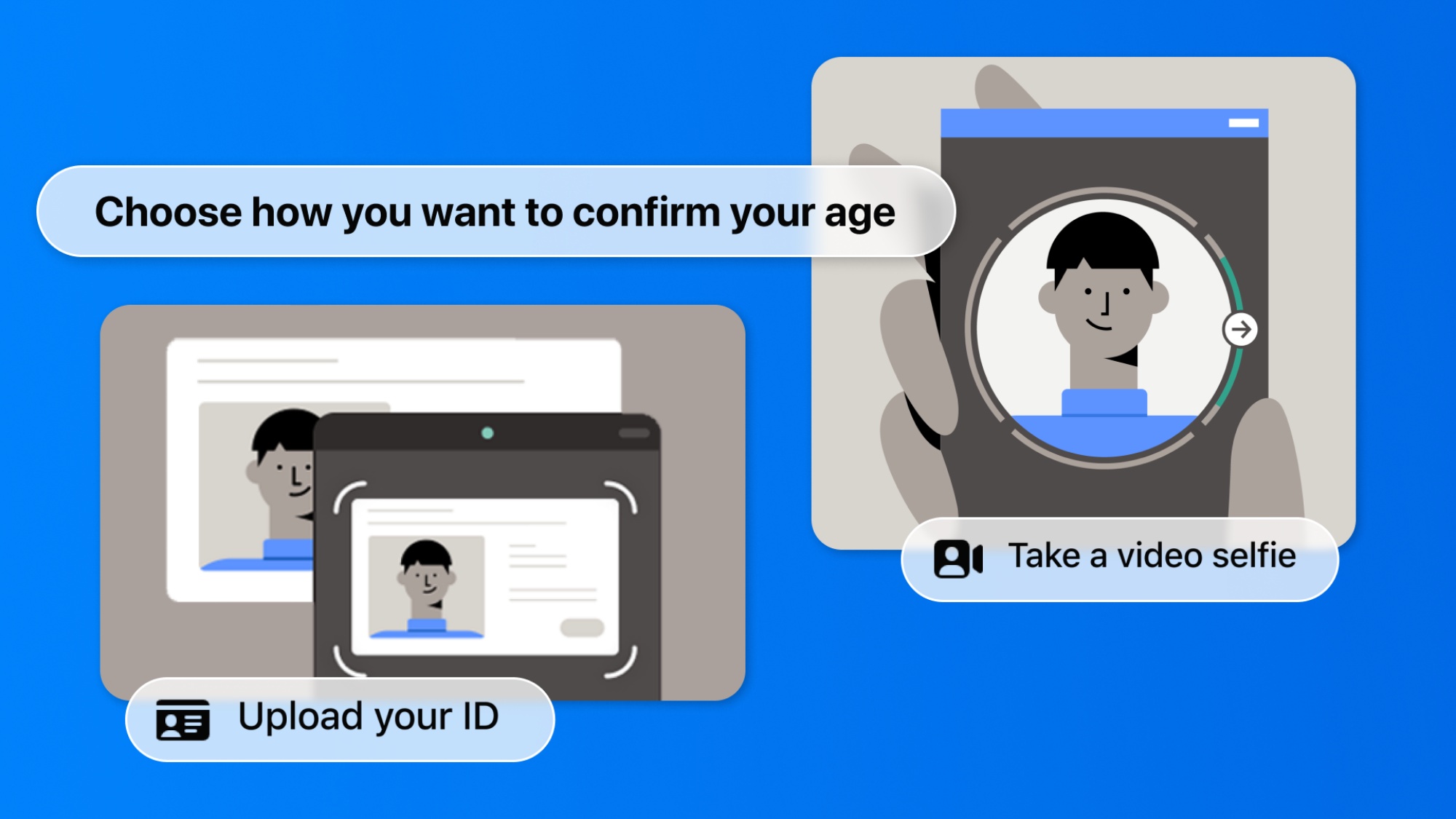

Meta said it uses a layered approach to detect underage users on its platforms. This includes age checks during registration, reports from other users, manual content review, and artificial intelligence tools that analyse account behaviour.

Accounts suspected of belonging to teenagers can be reclassified as Teen Accounts after review. Meanwhile, accounts believed to be owned by children under 13 may be removed entirely. Verification methods may also include identification documents or other forms of age confirmation when necessary.

Advertising Restrictions For Teens

The company also reiterated that teenagers under the age of 18 cannot be targeted with advertisements based on their interests or personal data. Instead, ads served to teen users are limited to broader factors such as age and general location.

Meta says this approach aligns the advertising experience with age-based content guidelines similar to those used in film classification systems.

Working With Regulators

Meta also pointed to age verification initiatives implemented in countries such as Brazil and in several US states as possible models for strengthening protections. According to the company, its goal is to ensure that safety measures are applied by default, so that teens can use digital platforms more securely and parents face less of a burden in configuring protections manually.