Since the recent surge in AI development, concerns about data centres have come into sharper focus. These facilities consume significant amounts of energy and water, raising questions about their long-term environmental impact. Perhaps in a bid to quell these worries without hindering expansion, some companies have begun exploring more unconventional ideas, including the possibility of moving compute infrastructure into space. The concept is still largely experimental and faces several technical challenges, but companies like AMD are already discussing how AI workloads could eventually extend beyond Earth.

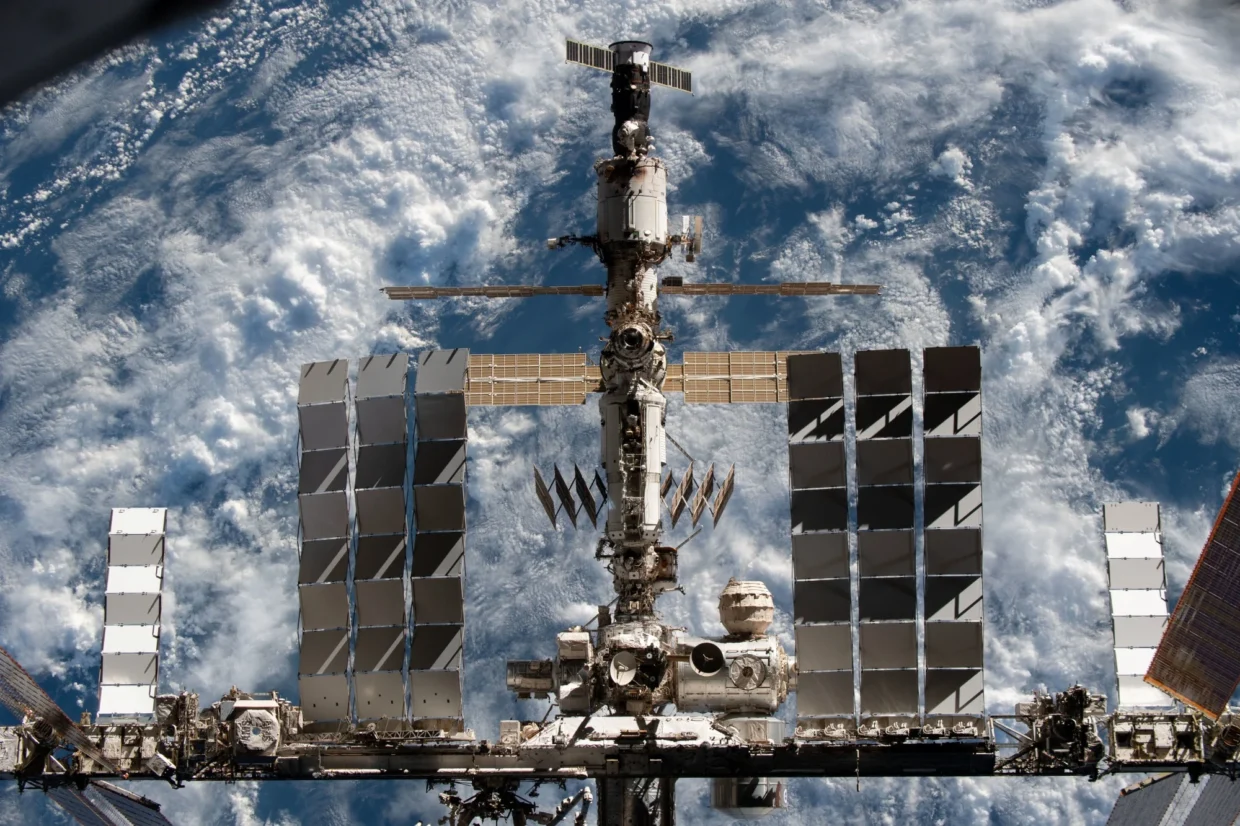

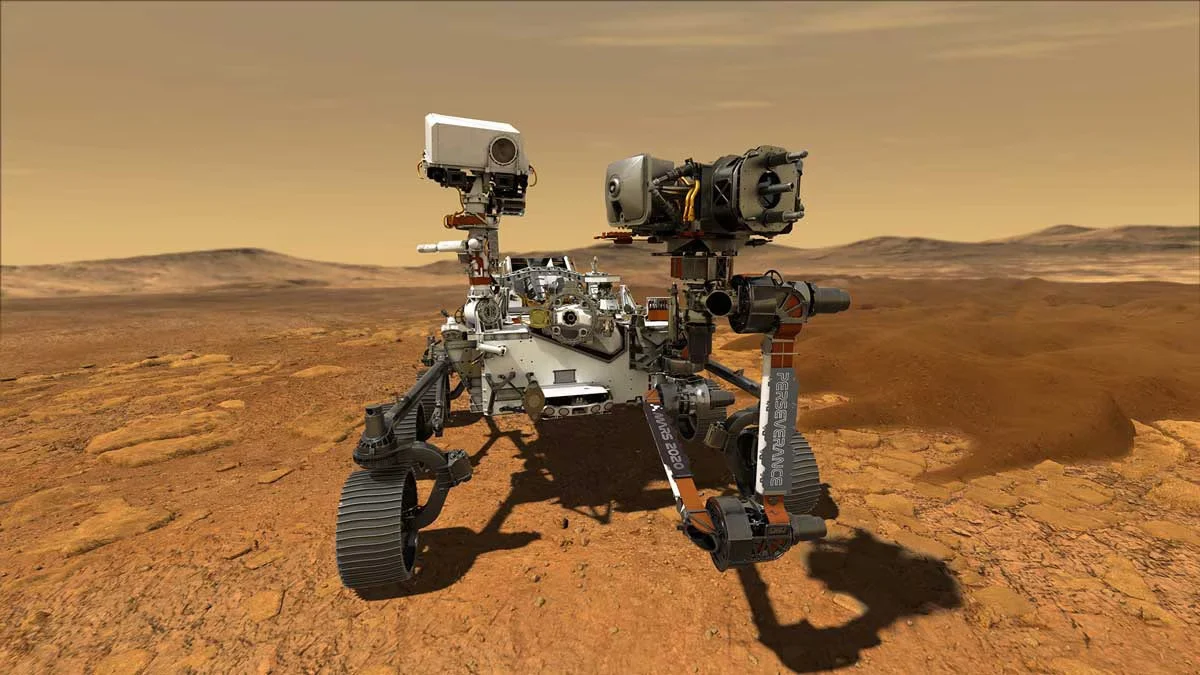

In a recent blog post, Mark Papermaster, CTO of AMD, describes space as the “ultimate edge environment”, where systems have to operate with limited power, restricted connectivity, and long communication delays with Earth. Because satellites and spacecraft cannot rely on constant links to ground stations, onboard processing is not just beneficial, but essential.
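To put the connectivity constraint in rough numbers, the sketch below estimates how long it would take to send a data set to the ground when the link is usable only during contact windows. The function name and every figure (1 TB of data, a 100 Mbps link, 40 minutes of contact per day) are illustrative assumptions, not values from AMD's blog.

```python
def downlink_days(data_gb: float, link_mbps: float, contact_minutes_per_day: float) -> float:
    """Days needed to send data_gb gigabytes over a link that is
    available only during daily contact windows (hypothetical model)."""
    if data_gb < 0 or link_mbps <= 0 or contact_minutes_per_day <= 0:
        raise ValueError("data must be non-negative; rate and contact time must be positive")
    total_bits = data_gb * 8e9                       # gigabytes to bits
    contact_seconds = contact_minutes_per_day * 60   # usable link time per day
    bits_per_day = link_mbps * 1e6 * contact_seconds
    return total_bits / bits_per_day


# Assumed scenario: 1 TB of raw sensor data, 100 Mbps link, 40 min of contact per day.
raw_days = downlink_days(1000, 100, 40)

# Same link, but onboard processing keeps only 1% of the data (assumed ratio).
filtered_days = downlink_days(1000 * 0.01, 100, 40)

print(f"raw: {raw_days:.1f} days, filtered: {filtered_days * 24:.1f} hours")
```

Under these assumed figures, the raw data set would take about 33 days to downlink, while sending only the 1% kept after onboard processing would take about eight hours.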

Why Space?

Managing data centres on Earth is already challenging, and operating compute in orbit is more complex still. The blog explains that downlink is limited by bandwidth, power, and communication windows, which makes sending all data back to Earth slow and inefficient. So why are companies exploring space-based compute in the first place, and why is AMD discussing this direction?

According to Papermaster, edge processing allows spacecraft and satellites to interpret data locally and act on it directly. Engineers can also adapt onboard AI to different use cases depending on mission needs. He further highlights growing interest in orbital compute, driven by the “insatiable” demand for AI processing. There have been attempts to explore large-scale computing in space, partly to leverage abundant solar energy and what proponents see as the thermal advantages of operating in space. However, the concept still comes with significant challenges.

Challenges Of Orbital Data Centres

As noted in the blog, “large-scale orbital compute will ultimately be limited by power, thermal dissipation, radiation resilience, and communications.” Papermaster also highlights concepts such as sun-synchronous orbits, where satellites maintain consistent lighting conditions to maximise solar energy availability while reducing temperature fluctuations. Even so, heat dissipation remains a major challenge. Without air in space to carry heat away, these systems would have to rely on radiators to regulate temperature.

However, he views these constraints in a more positive light. “This unique constraint transforms performance-per-watt from a metric into a mandate that drives the architectural innovations making massive-scale AI in orbit a reality,” he says.
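For a sense of scale on the thermal side, a radiator in vacuum can shed heat only by radiation, so the Stefan-Boltzmann law gives a first-order estimate of the area needed. This is a simplified sketch: the function name, the 300 K radiator temperature, the 0.9 emissivity, and the 1 MW load are assumptions for illustration, and the model ignores heat absorbed from the Sun and Earth.

```python
# Stefan-Boltzmann constant in W / (m^2 * K^4)
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_w: float, temp_k: float, emissivity: float = 0.9,
                     radiating_sides: int = 1) -> float:
    """First-order radiator area needed to reject heat_w watts to deep space.

    Assumes an ideal grey-body panel at uniform temperature temp_k and
    no absorbed sunlight or Earth infrared (a deliberate simplification).
    """
    if heat_w < 0 or temp_k <= 0:
        raise ValueError("heat must be non-negative and temperature positive")
    if not 0 < emissivity <= 1 or radiating_sides not in (1, 2):
        raise ValueError("emissivity must be in (0, 1]; sides must be 1 or 2")
    flux_w_per_m2 = emissivity * SIGMA * temp_k ** 4  # radiated power per square metre
    return heat_w / (flux_w_per_m2 * radiating_sides)


# Assumed scenario: a 1 MW module with a single-sided radiator at 300 K.
area = radiator_area_m2(1_000_000, 300)
print(f"{area:.0f} m^2")
```

Under these assumptions, rejecting 1 MW takes roughly 2,400 square metres of single-sided radiator, which illustrates why every watt saved in compute matters in orbit.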

That said, at a meaningful scale, the design approach shifts towards modular, serviceable systems rather than what Papermaster refers to as “data centres in a box”. Over time, these modular deployments could grow towards multimegawatt-class capabilities. They would also require high-speed, low-latency interconnects between individual modules to function as a cohesive system.

In addition, reliability and serviceability become key considerations. These modules are expected to have finite operational lifetimes, which means operators will need to de-orbit and replace them periodically. In this model, the system behaves more like a managed fleet than a single long-lived installation.

Making Space “AI Buildable”

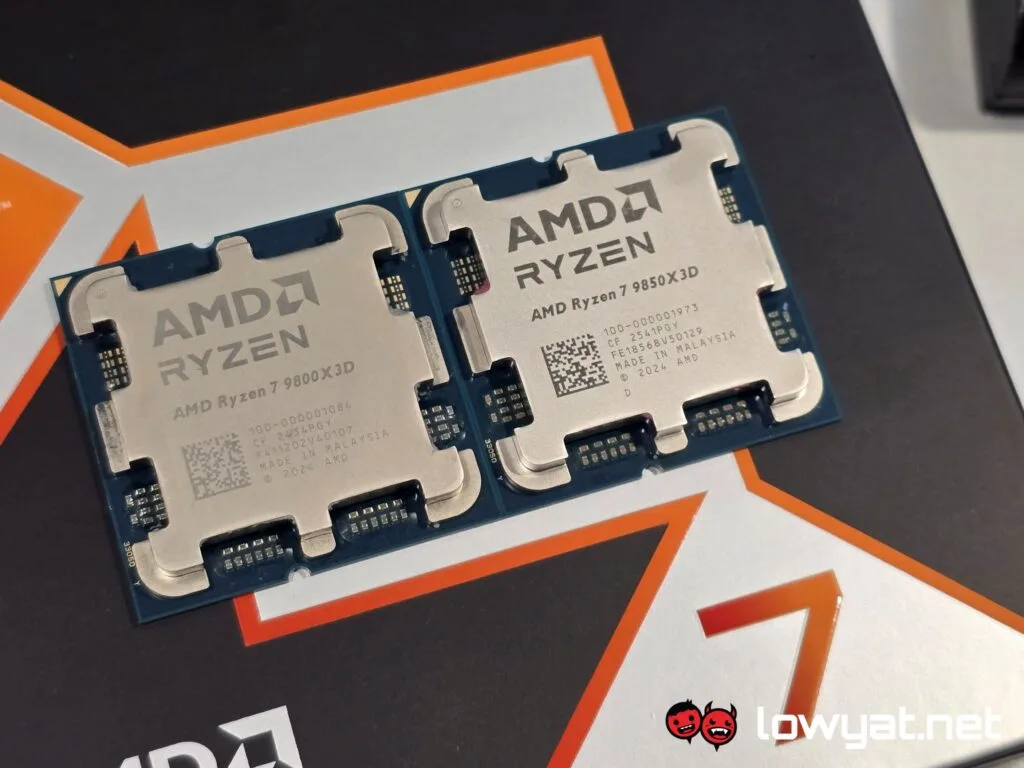

Against this backdrop, AMD is exploring approaches that could make AI in space more practical. Rather than treating each mission as a one-off deployment, the company describes a more repeatable, platform-based approach.

This begins with scalable compute building blocks that match the mission requirements in size and capability. AMD highlights a mix of CPUs, GPUs, and FPGAs, along with other accelerator options, all within a modular design philosophy aimed at supporting different workloads in space environments.

This approach essentially extends AMD’s existing edge computing strategy into space. By maintaining the same platform consistency used in ground-based deployments, the company claims, partners can build on a repeatable foundation and scale their capabilities over time without needing to redesign everything from scratch.

Additionally, since space missions are complex, AMD places strong emphasis on “openness” by promoting the use of its ROCm open software stack. This allows developers to optimise and validate systems across different hardware setups, avoiding proprietary lock-in while encouraging a more flexible and collaborative development environment for space applications.

AI is increasingly moving into environments where computing must operate under tight constraints, whether in factories, hospitals, vehicles, or even space. This shift brings processing closer to where data is generated, reduces latency, saves bandwidth, and improves overall efficiency in mission-critical systems. In that sense, space is the latest frontier for edge computing rather than a complete departure from how engineers already design and deploy these systems on Earth.

(Source: AMD)