Samsung Electronics has announced that it has begun mass production of HBM2 (High Bandwidth Memory) DRAM. The variant currently in production is a 4GB package, the first 4GB DRAM package to utilise HBM2 technology. According to Samsung, HBM2 is aimed at high performance computing, advanced graphics and enterprise use.

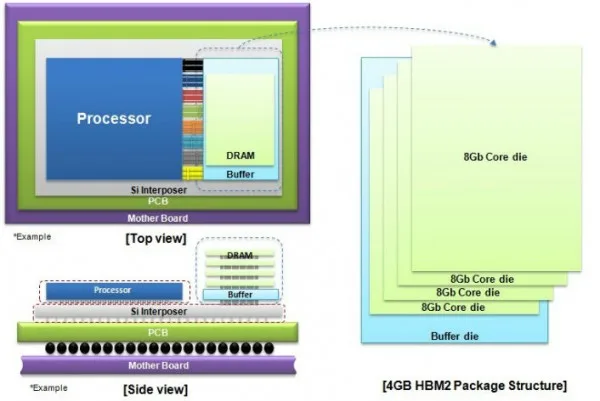

Samsung's 4GB HBM2 DRAM is made on its 20nm process technology, which the company says lets the chip outperform previous-generation DRAM in terms of efficiency. Samsung mentioned that each package stacks four 8Gb (gigabit) core dies on top of a buffer die, with the stack interconnected via TSV (Through Silicon Via) holes and “microbumps”. Since 8 gigabits equal 1 gigabyte, the four 8Gb core dies add up to 32 gigabits, or 4 gigabytes, per package.
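
The gigabit-to-gigabyte conversion is easy to check. The snippet below is only a minimal sketch of that arithmetic; the function name `stack_capacity_gigabytes` is made up for illustration, and it assumes that only the core dies contribute to capacity.

```python
BITS_PER_BYTE = 8


def stack_capacity_gigabytes(core_dies, gigabits_per_die):
    """Capacity of one stack in gigabytes, counting core dies only (assumption)."""
    total_gigabits = core_dies * gigabits_per_die
    return total_gigabits / BITS_PER_BYTE


# Four 8Gb core dies, as described for Samsung's 4GB HBM2 package.
print(stack_capacity_gigabytes(4, 8))  # 4.0
```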

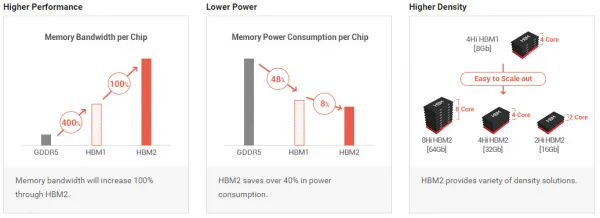

Compared with first-generation HBM, HBM2 doubles the bandwidth per stack: HBM1 topped out at 128GB/s, while HBM2 reaches an impressive 256GB/s. Compared with the more commonly used GDDR5, that is roughly seven times the bandwidth of a single chip.

Knowing this, two 4GB HBM2 stacks would provide 8GB of VRAM with 512GB/s of bandwidth, which matches the memory bandwidth of AMD’s R9 Fury X. Samsung mentioned that the current 4GB package can be combined into configurations of up to 24GB of HBM2 with a mind-blowing 1.5TB/s of bandwidth; that’s roughly four and a half times the memory bandwidth of a GTX Titan X.
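
These multi-stack figures follow from simple multiplication, which the rough sketch below works through. It assumes capacity and bandwidth both scale linearly with the number of stacks. The six-stack case is inferred from 24GB divided by 4GB per stack rather than stated by Samsung, and the helper name `hbm2_configuration` is purely illustrative.

```python
# Per-stack figures quoted in the article.
HBM1_GBPS_PER_STACK = 128
HBM2_GBPS_PER_STACK = 256
HBM2_GB_PER_STACK = 4


def hbm2_configuration(stacks):
    """Return (capacity in GB, bandwidth in GB/s) for a number of 4GB HBM2 stacks.

    Assumes both values scale linearly with stack count.
    """
    return stacks * HBM2_GB_PER_STACK, stacks * HBM2_GBPS_PER_STACK


for stacks in (2, 6):  # 6 stacks is inferred from 24GB / 4GB per stack
    capacity_gb, bandwidth_gbps = hbm2_configuration(stacks)
    print(f"{stacks} stacks: {capacity_gb}GB, {bandwidth_gbps}GB/s")
# 2 stacks: 8GB, 512GB/s
# 6 stacks: 24GB, 1536GB/s
```

Four first-generation stacks at 128GB/s each would give the same 512GB/s, which is consistent with the Fury X comparison, though the stack count of that card is not stated here.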

Samsung plans to expand its HBM2 lineup by introducing an 8GB variant with higher maximum bandwidth sometime this year. HBM2 could well appear on upcoming GPUs from AMD (Polaris) and Nvidia (Pascal). It’s enlightening to see how far GPU technology has come since it was first, arguably, introduced in 1999 by Nvidia with the GeForce 256. Details of the HBM2 technology can be found here.

(Source: Samsung)